A software development methodology is a structured framework used to plan, manage, and control the process of developing an information system. It defines the roles, activities, and deliverables required to move a project from initial concept to final deployment and maintenance.

Software development methodologies: A complete guide from waterfall to AI-driven SDLC

23 minutes read

You probably already know the principles and definitions of the main software development methodologies. But can you apply them in the context of your own teams, codebases, projects, clients, and deadlines?

This guide is for technical decision-makers who need more than a summary or an abstract concept. You need to understand what the common methods of software development are, what each methodology actually costs, where it breaks, and how to construct a working hybrid before the project kick-off slide is even finished.

What is software development methodology?

Definition

A software development methodology is a structured system of principles, practices, and roles that governs how a team moves from a problem statement to deployed, maintainable software. It determines how work is planned, how risk is managed, how progress is measured, and how teams communicate – both internally and with stakeholders.

Methodology is not process documentation. It’s the operating model underneath your process documentation.

Business cost

Choose well, and your systems development methodology becomes an accelerant: faster decisions, cleaner handoffs, predictable delivery. Choose poorly or apply the right methodology in the wrong context, and you get the opposite: scope creep camouflaged by sprint ceremonies, compliance gaps that surface during audits, and a team running fast in the wrong direction.

Choosing the wrong software development method results in rework, attrition (developers leave dysfunctional processes), and strategic drift. A significant share of large software projects still fails or is substantially challenged, and methodology misalignment is consistently among the root causes.

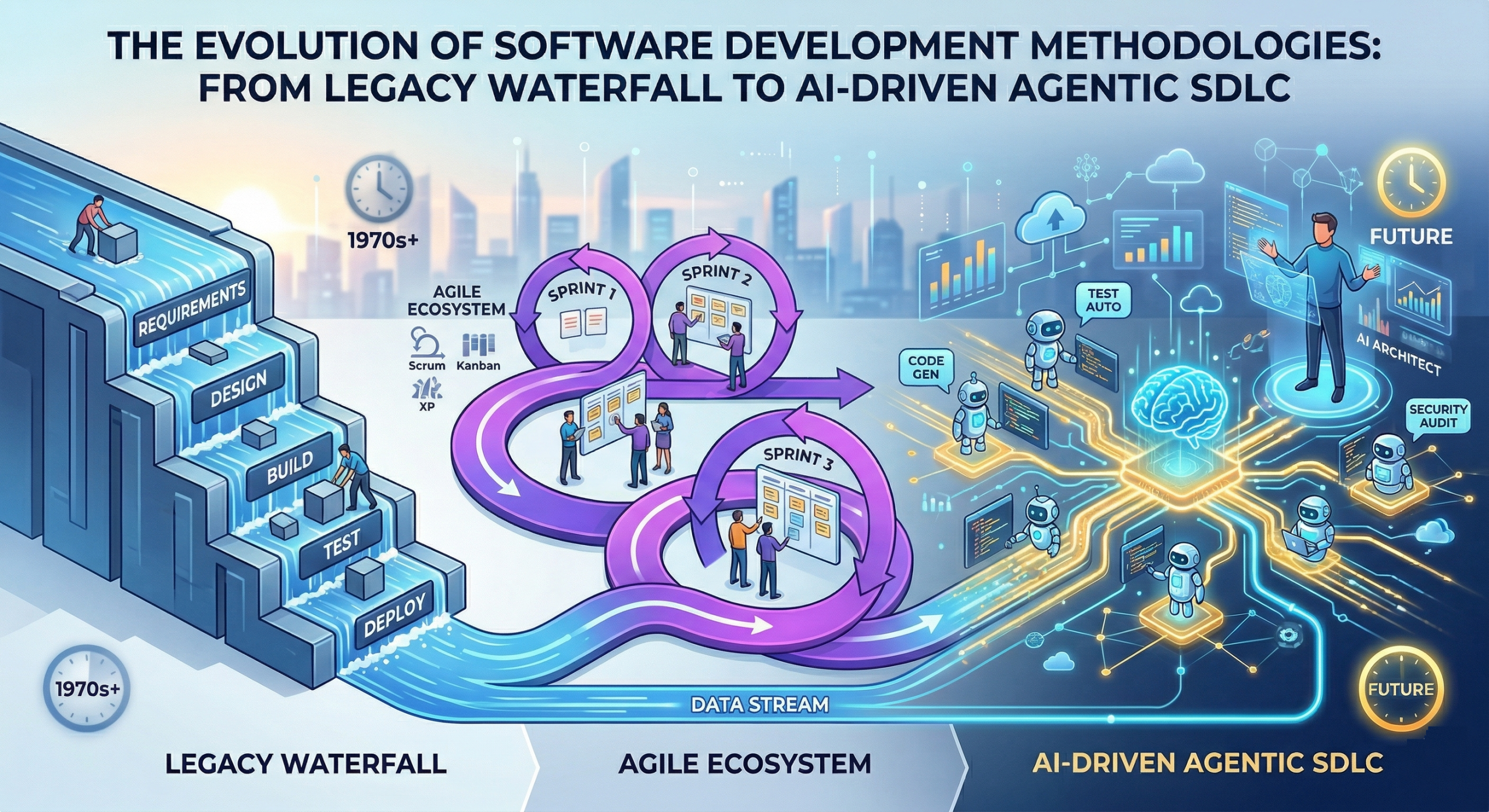

Evolution

Software development methodologies have evolved in response to repeated, expensive failures:

- 1950s-70s: No formal methodology, projects ran on individual heroics.

- 1970s-80s: Waterfall emerges from manufacturing and defense.

- 1990s: The complexity of commercial software exposed Waterfall’s rigidity, and iterative approaches began to appear.

- 2001: The Agile Manifesto, which became a cultural shift as much as a process shift.

- 2010s: DevOps, Lean, and scaling frameworks close the gap between development speed and deployment reliability.

- 2020s: AI enters the development loop – first as a code assistant, then as an agent capable of autonomous task execution. The SDLC is being restructured again.

Understanding this arc matters because every development methodology carries assumptions baked in at its founding moment. The failures of each generation became the foundations of the next. Consequently, applying a 1970s methodology to a 2020s AI-native product team is not just inefficient, it’s structurally incompatible.

Linear and traditional methodologies: Waterfall, V-model, and spiral

These software development processes and methodologies remain relevant in specific, well-defined contexts, particularly where compliance, documentation, and predictability are more important than adaptability. Let’s take a closer look at each of the models.

Waterfall

What it is: Sequential development in discrete phases: Requirements → Design → Implementation → Testing → Deployment → Maintenance. Each phase must be formally completed before the next begins.

Where it works: Government contracts, defense systems, medical device software, and any domain where requirements are legally fixed before development starts. If a regulator needs a complete design specification before approving development, Waterfall provides that audit trail.

Where it doesn’t: Any product where market requirements, user behavior, or technology can shift mid-project. Which, in 2026, is most products.

Decision signal: If your requirements document has legal standing or regulatory approval before development begins, Waterfall deserves serious consideration. If it doesn’t, look elsewhere.

V-model

What it is: An extension of Waterfall that pairs each development phase with a corresponding testing phase. Requirements → Acceptance Test Plan. System design → System Test Plan. Architecture → Integration Test Plan. Every build artifact has a mirror test artifact created in parallel.

Where it works: Safety-critical systems (medical devices, aerospace, automotive software, industrial control systems). It aligns with IEC 62304, ISO 26262, and similar standards.

The real value: V-Model makes test coverage a first-class deliverable, not an afterthought. You cannot skip a testing phase without explicitly breaking a documented contract — which is exactly what regulators want to see.

Cost: High upfront planning overhead. Changes late in the cycle are expensive. Expect up to half of total project time to be spent in documentation before you write a single line of code.

Spiral

What it is: An iterative model built around risk management. Each spiral cycle involves: planning → risk analysis → engineering → customer evaluation. You spiral outward, progressively elaborating the system while explicitly managing risk at each turn.

Where it works: Large, complex systems with significant technical uncertainty: enterprise platforms, defense systems, novel engineering problems. It’s the methodology for when you genuinely don’t know what you don’t know.

The catch: It requires skilled risk analysts and disciplined management. Without that, it collapses into expensive, unstructured iteration. It’s also rarely used in its pure form as most teams adopt its risk-management principles into hybrid models.

Types of software development methodologies: Scrum, Kanban, XP, and FDD

Contrary to common belief, Agile is not a methodology. It’s a set of values and principles. The frameworks below are the methodologies that embody those principles and put them into practice.

Scrum

What it is: A lightweight framework built around fixed-length iterations (Sprints, typically 2 weeks), three roles (Product Owner, Scrum Master, Development Team), and four ceremonies (Sprint Planning, Daily Standup, Sprint Review, Sprint Retrospective).

What it does well: Creates a regular cadence for decision-making and a clear accountability structure. The Product Owner role forces prioritization – someone has to decide what goes into the Sprint backlog.

What it does poorly: Scrum says almost nothing about engineering practices. Teams that adopt Scrum without accompanying technical discipline (automated testing, CI/CD, clean code practices) often end up with a faster way to produce technical debt.

Decision signal: Scrum fits product teams building customer-facing software with active stakeholder involvement. It struggles with maintenance-heavy teams, infrastructure work, and projects where the work is not easily decomposed into two-week chunks.

Kanban

What it is: A flow-based system that visualizes work on a board (columns representing workflow stages), limits Work in Progress (WIP), and optimizes for continuous delivery rather than iteration cycles.

The core mechanism: WIP limits. If your “In progress” column has a limit of 3 and it’s full, no new work starts until something moves out of it. This forces teams to finish work rather than start new work, which is a discipline most teams lack.
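
The WIP-limit rule can be sketched in a few lines of Python. This is a minimal illustration rather than how any particular board tool works; the `Board`, `Column`, and `WipLimitExceeded` names and the three-column layout are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


class WipLimitExceeded(Exception):
    """Raised when a card would push a column past its WIP limit."""


@dataclass
class Column:
    name: str
    wip_limit: Optional[int] = None  # None means the column is unlimited
    cards: List[str] = field(default_factory=list)


class Board:
    def __init__(self, columns: List[Column]) -> None:
        self.columns: Dict[str, Column] = {c.name: c for c in columns}

    def add(self, card: str, column: str) -> None:
        col = self.columns[column]
        if col.wip_limit is not None and len(col.cards) >= col.wip_limit:
            raise WipLimitExceeded(f"{column!r} is full (limit {col.wip_limit})")
        col.cards.append(card)

    def move(self, card: str, source: str, target: str) -> None:
        if card not in self.columns[source].cards:
            raise ValueError(f"{card!r} is not in {source!r}")
        # Adding first means a rejected move leaves the card where it was.
        self.add(card, target)
        self.columns[source].cards.remove(card)


# Hypothetical board: only "In progress" carries a limit.
board = Board([Column("To do"), Column("In progress", wip_limit=3), Column("Done")])
for card in ["A", "B", "C", "D"]:
    board.add(card, "To do")
for card in ["A", "B", "C"]:
    board.move(card, "To do", "In progress")

try:
    board.move("D", "To do", "In progress")  # column is full, so this is refused
except WipLimitExceeded as exc:
    print(exc)

board.move("A", "In progress", "Done")   # finishing work frees a slot
board.move("D", "To do", "In progress")  # now the pull succeeds
```

The point of the sketch is the ordering: card D can only start once card A has finished, which is exactly the behavior the limit is meant to force.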

Where it excels: Operations teams, support engineering, maintenance work, and any context where work arrives unpredictably and can’t be pre-batched into sprints.

Decision signal: If your team is regularly context-switching between urgent production issues and planned work, Kanban or a Scrum-Kanban hybrid gives more realistic throughput visibility than sprint velocity metrics.

Extreme programming (XP)

What it is: An Agile methodology built around engineering excellence. Its practices include: Test-Driven Development (TDD), pair programming, continuous integration, small releases, collective code ownership, and on-site customer collaboration.

What distinguishes it: XP is the only major Agile framework that mandates specific technical practices. This is its strength. Teams running XP tend to produce more maintainable codebases because the methodology builds quality in at the practice level.

The cost: Pair programming and TDD require discipline and often feel counterproductive to developers new to them. Organizations that try to adopt XP without management buy-in for its productivity model (which looks slower in the short term) typically abandon it within months.

Decision signal: XP is worth serious consideration for teams building software that must remain maintainable for years – complex domain logic, high-change business rules, fintech, healthcare records systems.

Feature-Driven Development (FDD)

What it is: A model-driven, iterative methodology that organizes development around a feature list derived from a domain model. Key practices include: developing an overall model, building a features list, planning by feature, designing by feature, and building by feature.

Where it fits: Mid-to-large teams (20-200+ developers) building complex domain systems. FDD provides structure that Scrum lacks at scale without requiring the overhead of Waterfall. It was influential in the 2000s, and many of its patterns appear in scaled Agile frameworks today.

Practical note: FDD is rarely adopted wholesale in greenfield projects today, but its feature-centric decomposition approach is useful in any context where domain modeling is a prerequisite to development.

Scaling and efficiency methodologies: Lean, SAFe, LeSS, and RAD

Lean software development

What it is: An adaptation of Toyota Production System principles to software: eliminate waste, amplify learning, decide as late as possible, deliver as fast as possible, empower the team, build integrity in, see the whole.

The seven wastes in the software context: Partially done work, extra processes, extra features (over-engineering), task switching, waiting, motion (unnecessary handoffs), and defects.

Why it matters for decision-makers: Lean is less a methodology and more a diagnostic lens. Running a Lean analysis on your current process often reveals that half of the cycle time is waiting – for approvals, environments, reviews, and deployments. That’s recoverable time without changing your core framework.
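
As a rough illustration of that diagnostic, the share of cycle time spent waiting can be computed from a per-stage timeline. The sketch below assumes you can label each stage as active or waiting; the `Stage` type and the ticket timeline are hypothetical values invented for the example, not measurements.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Stage:
    name: str
    hours: float
    waiting: bool  # True when the work item sits idle in this stage


def waiting_share(stages: List[Stage]) -> float:
    """Fraction of total cycle time spent waiting rather than being worked on."""
    total = sum(s.hours for s in stages)
    if total <= 0:
        raise ValueError("cycle time must be positive")
    return sum(s.hours for s in stages if s.waiting) / total


# Hypothetical timeline for one ticket, in working hours.
ticket = [
    Stage("development", 16, waiting=False),
    Stage("awaiting review", 12, waiting=True),
    Stage("code review", 4, waiting=False),
    Stage("awaiting test environment", 8, waiting=True),
    Stage("testing", 4, waiting=False),
    Stage("awaiting deployment approval", 4, waiting=True),
]

share = waiting_share(ticket)
print(f"waiting: {share:.0%} of cycle time")
```

With these made-up numbers, 24 of the 48 hours are waiting time. Running the same calculation over real ticket histories is one way to see where the recoverable time sits.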

Decision signal: If your team’s delivery speed is limited by process overhead rather than technical complexity, apply Lean principles before adopting a new framework. Fix the system, not the people.

Scaling Agile (SAFe, LeSS, DA)

When a single Agile team becomes ten teams building interdependent software, Agile’s team-level ceremonies break down. Three frameworks attempt to solve this:

SAFe (Scaled Agile Framework): The most comprehensive and most controversial. Adds Program Increment (PI) Planning, Agile Release Trains (ARTs), and multiple organizational layers. It imposes significant structure and ceremony overhead but provides clear accountability and portfolio-level visibility. Common in large enterprises, financial institutions, and government agencies running 500+ engineers.

LeSS (Large-Scale Scrum): Takes the opposite approach: apply Scrum at scale with as little additional process as possible. One Product Backlog, one Product Owner, multiple teams. Less overhead than SAFe, but requires significant organizational discipline.

DA (Disciplined Agile, formerly Disciplined Agile Delivery or DAD): A context-sensitive toolkit rather than a prescriptive framework. It maps decisions to contexts and lets teams choose practices based on their situation. More flexible than SAFe, more structured than LeSS.

How to decide: SAFe if you need audit trails and portfolio management for executives. LeSS if you want minimal overhead and strong Scrum discipline. DA if your organization has enough process maturity to make context-driven choices without a prescriptive map.

Rapid Application Development (RAD)

What it is: A methodology prioritizing speed through prototyping, user feedback loops, and reuse of existing components. Requirements and design phases are compressed; the prototype is the specification.

Where it works: Internal tools, MVPs, proof-of-concept projects, and any situation where time-to-first-feedback matters more than architectural elegance.

The risk: RAD prototypes have a tendency to become production systems before anyone intended them to. “We’ll clean it up later” is one of the most expensive phrases in software development.

Decision signal: RAD is legitimate for bounded, low-stakes applications. Build in an explicit architectural review gate before any RAD prototype crosses into production infrastructure.

DevOps, DevSecOps, and platform engineering

DevOps

What it is: A cultural and technical movement that merges development and operations responsibilities, automates the software delivery pipeline, and enables continuous deployment. It’s not a framework, but a set of practices and a cultural posture.

The three ways: Flow (optimize delivery from dev to ops), Feedback (amplify signals from ops back to dev), Continuous Learning (build a culture of experimentation and improvement).

What it actually requires: Organizational restructuring. DevOps fails when it’s implemented as a tooling project (“we bought Jenkins / GitLab CI / GitHub Actions”) without changing who owns production reliability. The tools are the easy part.

Decision signal: If development teams deploy to production and own what they deploy, you have the cultural foundation for DevOps. If operations is a separate gating function that approves deployments, you have Waterfall with better tools.

DevSecOps

What it is: DevOps with security integrated at every pipeline stage – shift-left security. Threat modeling in design, SAST/DAST in CI pipelines, dependency scanning, secrets management, infrastructure-as-code security scanning, and runtime protection.

Why it’s no longer optional: The average time-to-exploit for a disclosed CVE has dropped to days. Security reviews at the end of a release cycle are too slow. DevSecOps is the architectural response.

Practical implementation: Start with the highest-leverage gates — dependency scanning (Snyk, Dependabot), secrets scanning (GitGuardian, GitHub Advanced Security), and container image scanning. Don’t try to implement the full security pipeline in one quarter.
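
To make the idea of a pipeline gate concrete, here is a toy secrets check in Python. It is a sketch only and does not reflect how GitGuardian, GitHub Advanced Security, or any other product works internally. The two patterns and the `scan` and `gate` function names are assumptions chosen for illustration.

```python
import re
from typing import List, Tuple

# Two illustrative rules only; real scanners ship much larger, tuned rule sets.
RULES = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private-key-header": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
}


def scan(text: str) -> List[Tuple[int, str]]:
    """Return (line number, rule name) for every line that matches a rule."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings


def gate(text: str) -> int:
    """Exit code for a CI step: 0 when clean, 1 when anything was found."""
    return 1 if scan(text) else 0


# A fake key assembled at runtime so this example holds no literal secret.
fake_key = "AKIA" + "X" * 16
sample = f'region = "eu-west-1"\naccess_key = "{fake_key}"\n'
findings = scan(sample)
```

A CI job would call something like `gate` on the changed files and fail the build on a non-zero result. That is the sense in which a gate sits in the pipeline rather than in an end-of-cycle review.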

Decision signal: If your organization handles customer PII, financial data, or operates in a regulated industry, DevSecOps is a compliance and risk management requirement, not a nice-to-have.

Platform engineering

What it is: Building and maintaining an internal developer platform (IDP) – a self-service layer of tools, templates, and automated workflows that lets product teams deploy, monitor, and scale applications without deep operations expertise.

The business case: Developer cognitive load is a real throughput constraint. When every team maintains its own Kubernetes configuration, CI pipeline, secrets management, and observability stack, you have N teams solving the same problems N times. Platform engineering centralizes that into a product that other teams consume.

The investment: Platform engineering requires treating your internal platform as a product – with a roadmap, SLOs, and dedicated engineers. Organizations that treat it as “the DevOps team doing extra work” see very low adoption.

Decision signal: If you have more than 5 product teams and each spends significant time on the same undifferentiated infrastructure work, platform engineering has a calculable ROI.

FinOps-integrated development

What it is: The practice of embedding cloud cost awareness into the development workflow – not as a post-deployment audit, but as a design and sprint-level constraint.

Why it matters now: Cloud-native architectures can generate cost surprises that dwarf the salary cost of the engineers who built the feature. A poorly tuned data pipeline, an unoptimized ML inference endpoint, or a misconfigured auto-scaling group can produce a five-figure monthly bill from a single sprint’s work.

In practice: Cost tagging in infrastructure-as-code, cost estimates in architectural decision records (ADRs), per-team cloud spend dashboards visible at the sprint level, and engineering involvement in reserved capacity planning.
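
Cost tagging is the easiest of these to automate. The sketch below checks that planned resources carry the tags a cost report would group by. The required tag names and the dictionary shape of each resource are hypothetical, standing in for whatever your infrastructure-as-code tool can export.

```python
from typing import Dict, List

# Hypothetical tagging policy; substitute whatever your cost reports group by.
REQUIRED_TAGS = {"team", "cost-center", "environment"}


def missing_cost_tags(resources: List[dict]) -> Dict[str, List[str]]:
    """Map each non-compliant resource name to the tags it lacks."""
    problems = {}
    for resource in resources:
        missing = REQUIRED_TAGS - set(resource.get("tags", {}))
        if missing:
            problems[resource["name"]] = sorted(missing)
    return problems


# Hypothetical resources, shaped as they might look once parsed from an IaC plan.
planned = [
    {"name": "orders-db",
     "tags": {"team": "payments", "cost-center": "cc-12", "environment": "prod"}},
    {"name": "inference-endpoint", "tags": {"team": "ml"}},
    {"name": "scratch-bucket"},
]

problems = missing_cost_tags(planned)
for name, tags in problems.items():
    print(f"{name}: missing {', '.join(tags)}")
```

Run as a pre-merge check, a rule like this keeps untagged spend from reaching the bill, where it is much harder to attribute to a team or sprint.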

Decision signal: If your cloud bill is growing faster than your revenue or user base, FinOps integration in development is the diagnostic starting point.

AI-augmented software development

Productivity gains, risks, and governance in AI-driven development

What it is: The use of AI models as active participants in the development cycle – code generation, code review, test generation, documentation, architectural analysis, and bug investigation.

Current state: AI coding assistants (GitHub Copilot, Cursor, JetBrains AI) are in production use across most technology organizations. Studies from Google, Microsoft, and academic institutions consistently show productivity gains of 20-55% on well-scoped coding tasks, with higher gains on boilerplate-heavy work and lower gains on complex algorithmic problems.

The governance gap: AI-generated code requires review standards at least as strict as those applied to human-written code. Organizations that have relaxed code review rigor on AI-generated output are accumulating subtle bugs and security vulnerabilities that are harder to detect precisely because the code looks polished.

Decision signal: Establish AI code contribution policies before widespread adoption, not after. Define what AI tools are approved, what data can be sent to external models, and what review standards apply to AI-generated code.

Vibe coding and prompt-first development

What it is: A development mode where a developer describes intent in natural language and iterates on AI-generated implementations, spending minimal time writing code directly.

The honest assessment: Vibe Coding is highly effective for prototyping, internal tooling, and well-understood problem domains. It has significant limitations for systems requiring deep domain knowledge, complex architectural decisions, or security-sensitive implementations. AI can generate a payment processing endpoint; whether it correctly handles idempotency, race conditions, and PCI scope is a different question.

Organizational risk: Junior developers who learn primarily through Vibe Coding may develop gaps in foundational understanding that become visible only under operational pressure – incidents, debugging sessions, architectural trade-offs.

Decision signal: Vibe Coding belongs in the prototyping and internal tooling category. Production systems require engineers who understand what the code does, regardless of who or what generated it.

Low-code and no-code in enterprise development

What it is: Platforms such as Retool, OutSystems, Mendix, Microsoft Power Platform, and Bubble enable application development with minimal hand-coding – configuration-based UI building, workflow automation, and data integration.

Where it belongs: Internal operational tools, business process automation, reporting dashboards, approval workflows, and any application where the primary challenge is configuration rather than algorithmic complexity.

The enterprise reality: Low-code platforms work best at the edges of your technology stack, not at the core. They create problems when used to build systems with complex business logic, high transaction volumes, or strict performance requirements. They also create vendor lock-in that can be expensive to exit.

Green software engineering

What it is: The practice of designing software to minimize energy consumption and carbon footprint at the algorithmic, infrastructure, and architectural levels.

Why it belongs in the methodology conversation: The EU Corporate Sustainability Reporting Directive (CSRD) and similar regulations are pushing software’s energy footprint into ESG reporting scope. More directly, efficient code is also faster and cheaper to run, which is exactly where the business case and the environmental case intersect.

In practice: Profiling energy consumption, preferring efficient algorithms over raw compute scaling, right-sizing cloud resources, choosing data center regions by carbon intensity, and making architectural choices (edge vs. cloud, batch vs. real-time) with energy cost as an explicit variable.

Decision signal: If your organization has ESG commitments or operates under sustainability reporting requirements, Green Engineering practices need to be present in your SDLC documentation. Even without regulatory pressure, computing efficiency has direct cost implications.

Software methodology comparison

| Methodology | Best fit | Risk profile | Team size | Change tolerance | Compliance readiness |
| --- | --- | --- | --- | --- | --- |
| Waterfall | Fixed-requirement, regulated | Low process, high change risk | Any | Very low | High |
| V-Model | Safety-critical systems | Very low process, high change risk | Mid-Large | Very low | Very high |
| Spiral | High-uncertainty, complex systems | Moderate | Mid-Large | Moderate | Moderate |
| Scrum | Product development, SaaS | Moderate | Small-Mid (5-9 per team) | High | Low-Moderate |
| Kanban | Ops, support, maintenance | Low | Any | Very high | Low |
| XP | Long-lived, complex domain software | Low (with discipline) | Small | High | Moderate |
| Lean | Process optimization | Low | Any | High | Low |
| SAFe | Large enterprise, regulated at scale | High overhead | Large (100+) | Moderate | High |
| DevOps | Cloud-native, continuous delivery | Moderate | Any | Very high | Moderate |
| DevSecOps | Regulated, security-sensitive | Moderate | Any | High | Very high |
| Platform Eng. | Multi-team organizations | High investment | Large | High | Moderate |
| AI-Augmented | Modern product teams | Tooling/governance risk | Any | Very high | Low (evolving) |

When software methodologies fail: Common red flags and how to spot them

When a methodology fails, the warning signs were usually there and were missed. They accumulate quietly: sprint reviews nobody attends, architectural decisions made before the first ticket was written, Confluence pages that describe a process the team stopped following months ago. The three patterns below are the most common across organizations of all sizes. If you can recognize them early, you can avoid them and protect the success and longevity of your project.

Zombie Scrum

You have regular standups, a board full of columns, and retrospectives on the schedule, but nothing changes from sprint to sprint: the backlog grows without meaningful prioritization, retrospective action items carry forward indefinitely, and the Product Owner is either absent or functionally a ticket-taker rather than a decision-maker.

This situation is called Zombie Scrum – when Scrum is performed as a ritual rather than practiced as a system for delivering value. The root cause is almost always organizational. Scrum was adopted as a process without establishing the conditions it requires, such as an empowered Product Owner with genuine authority over scope, meaningful stakeholder participation in sprint reviews, and management trust in the team’s capacity to self-organize.

To fix this, you don’t need more Scrum. You need to pause the ceremonies and check whether the foundational commitments are in place. Who owns the product? What does Done look like for this particular team? What is the team accountable for delivering, and to whom? Until those questions have real answers, no amount of ceremony will have a measurable effect on outcomes.

Water-Agile-Fall

The Water-Agile-Fall pattern usually occurs like this: the development team runs sprints, and a backlog, a Scrum Master, and velocity metrics are in place. And yet requirements arrive as fully-specified documents after months of business analysis. Architecture is decided in a steering committee before development begins. The release date was fixed in a contract signed a year ago and cannot be moved regardless of what the team learns in the interim.

This pattern happens when Agile is adopted at the team level without any corresponding change to how the organization makes commitments. The team is iterating inside a waterfall container. The sprints are real, but there is no actual agility.

It’s a particularly expensive failure mode because it produces the overhead of both approaches without the benefits of either. The team carries the cost of Agile processes, while the organization carries the rigidity cost of sequential planning. Escaping it requires addressing the structures that sit above the development team: contract models, budget cycles, governance gates, and the tolerance for scope to evolve mid-project. Until those change, asking the team to be more Agile is asking them to run faster inside a locked room.

The tooling trap

When engineers spend a significant amount of time configuring and maintaining the toolchain rather than building a product, the tools become the work.

The tooling trap springs when organizations mistake purchasing decisions for process decisions. Tools amplify existing processes – functional ones and dysfunctional ones equally. A planning process that produces unclear requirements will produce unclear Jira tickets. A deployment process with too many approval gates will reproduce those gates in the CI/CD pipeline. The tool makes the dysfunction more visible and more expensive, but it does not resolve it.

The discipline required before adding any new tool is the same in every case: define the process the tool is meant to support, and identify the specific, measurable signal that will tell you whether it is working. If neither of those things can be articulated clearly before the procurement decision, the tool is being used to avoid a harder organizational conversation, not to enable a better one.

How to choose a software development methodology: a step-by-step framework

Step 1: Map your constraints – budget, timeline, and compliance

Start with the things you can’t change. Regulatory requirements, contractual structures, compliance standards, and hard deadlines are constraints; they cannot be negotiated.

To define your constraints, ask these three questions:

- What does our compliance environment require? (SOC 2, ISO 27001, HIPAA, FedRAMP, FDA, CE marking – each has methodology implications.) If certification requires complete design documentation before build, Waterfall or V-Model is not optional.

- What does our budget cycle look like? Annual fixed budgets favor phase-gate approaches. Rolling quarterly budgets enable iterative methodologies.

- What is the actual deadline structure? Is there a hard external deadline (regulatory submission, contract delivery, market launch)? Or is the deadline a planning assumption? The answer changes the trade-off between speed and quality.
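
The three questions above can be captured as a small checklist function, so the answers are written down rather than assumed. This is a sketch under the rules stated in this step; the `Constraints` fields and the wording of the notes are illustrative assumptions, not a complete decision model.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Constraints:
    design_docs_required_before_build: bool  # e.g. a certification demands it
    budget_cycle: str                        # "annual_fixed" or "rolling_quarterly"
    hard_external_deadline: bool             # False when the date is a planning assumption


def constraint_notes(c: Constraints) -> List[str]:
    """Translate the Step 1 answers into methodology implications."""
    if c.budget_cycle not in ("annual_fixed", "rolling_quarterly"):
        raise ValueError(f"unknown budget cycle: {c.budget_cycle!r}")
    notes = []
    if c.design_docs_required_before_build:
        notes.append("Compliance: Waterfall or V-Model documentation is not optional")
    if c.budget_cycle == "annual_fixed":
        notes.append("Budget: favors phase-gate approaches")
    else:
        notes.append("Budget: enables iterative methodologies")
    if c.hard_external_deadline:
        notes.append("Deadline: hard external date")
    else:
        notes.append("Deadline: planning assumption")
    return notes


# Hypothetical project: certified product, annual budget, contractual delivery date.
notes = constraint_notes(Constraints(True, "annual_fixed", True))
for note in notes:
    print(note)
```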

Step 2: Assess your team’s maturity, technical practices, and existing stack

Methodology choice is bounded by what your team can actually execute. It’s about your current capabilities, not about your goals or aspirations.

Team maturity signals:

- Can your team decompose a feature into testable increments? (Required for Scrum, XP)

- Do engineers own production reliability, or does a separate ops team? (Required for DevOps)

- Is there a functioning code review culture? (Required for any methodology to maintain quality)

- Can your team run a sprint without PM intervention on daily decisions? (Required for genuine Agile)

Tech stack signals:

- Automated test coverage below 40% makes continuous deployment risky regardless of methodology.

- Manual deployment processes cap delivery frequency – a DevOps aspiration without deployment automation is performance without substance.

- Legacy monoliths change the economics of iterative delivery – factor in refactoring cost when choosing a methodology.
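
The maturity and stack signals can be turned into an explicit gap list. The sketch below encodes only the checks named above, including the 40% coverage threshold; the `TeamSignals` field names are hypothetical, and the legacy-monolith signal is left out because it is a cost factor rather than a yes/no check.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TeamSignals:
    decomposes_into_testable_increments: bool
    engineers_own_production: bool
    has_code_review_culture: bool
    runs_sprints_without_pm_intervention: bool
    automated_test_coverage: float  # fraction between 0.0 and 1.0
    deployments_automated: bool


def readiness_gaps(s: TeamSignals) -> List[str]:
    """List what currently blocks each approach, based on the signals above."""
    gaps = []
    if not s.decomposes_into_testable_increments:
        gaps.append("Scrum/XP: features are not decomposed into testable increments")
    if not s.engineers_own_production:
        gaps.append("DevOps: a separate ops team owns production reliability")
    if not s.has_code_review_culture:
        gaps.append("Any methodology: no functioning code review culture")
    if not s.runs_sprints_without_pm_intervention:
        gaps.append("Agile: sprints depend on PM intervention for daily decisions")
    if s.automated_test_coverage < 0.40:
        gaps.append("Continuous deployment: automated test coverage is below 40%")
    if not s.deployments_automated:
        gaps.append("DevOps: manual deployments cap delivery frequency")
    return gaps


# Hypothetical team: solid habits, thin test coverage, manual deploys.
team = TeamSignals(True, True, True, True, 0.25, False)
gaps = readiness_gaps(team)
for gap in gaps:
    print(gap)
```

An empty list does not mean a methodology will succeed, only that none of the blockers named in this step is present.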

Step 3: Design your hybrid methodology

Most organizations don’t run a textbook methodology; they run a hybrid. The difference lies in whether that hybrid is principled.

A principled hybrid is one where:

- The core delivery model is clearly defined (Scrum for product teams, for example)

- Supplementary practices are explicitly chosen for specific needs (Kanban for ops, V-Model documentation for compliance artifacts, DevSecOps pipeline for all teams)

- Trade-offs between frameworks are documented and understood

- The hybrid is reviewed and adjusted as the organization scales

An unprincipled hybrid is what you get when teams adopt practices opportunistically, abandon them when they create friction, and can’t articulate why they do what they do. Document your methodology stack the same way you document your architecture. It should be a living decision record, not an onboarding slide.

Conclusion

Methodology debates often generate more heat than light because they’re proxies for deeper organizational questions: Who owns decisions? How much uncertainty can we tolerate? What are we actually optimizing for?

What separates organizations that get the methodology and tooling question right from those that don’t is not the methodology itself but the questions they ask before choosing, and whether they have the discipline to evaluate and adjust afterward.

At Blackthorn Vision, we help teams design and implement systems development methods that match their actual constraints, not their aspirational ones. If you’re evaluating a methodology change, a scaling challenge, or building a platform engineering function, let’s have a direct conversation about your context.

FAQ

What is a software development methodology?

A software development methodology is a structured system of principles, practices, and roles that governs how a team moves from a problem statement to deployed, maintainable software.

How do I choose the right methodology for my project?

The choice depends on several factors:

- Project Size & Complexity: Agile is great for complexity; Waterfall is better for simple, fixed scopes.

- Regulatory Requirements: Highly regulated industries (like MedTech) often require the documentation-heavy V-Model or Waterfall.

- Team Expertise: Modern methodologies like DevSecOps require a high level of technical maturity.

- Time to Market: If speed is the priority, Lean or AI-driven methodologies are usually the best choice.

Can you combine different methodologies?

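Yes, this is known as a hybrid methodology. Many organizations use a “Water-Agile” approach: applying Waterfall for high-level planning and budgeting, while using Agile/Scrum for the actual execution and development of the software. It works only when the combination is deliberate; sprints run inside fixed scope, dates, and architecture produce the Water-Agile-Fall pattern described above.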

What is "Platform Engineering" and how does it fit in?

Platform Engineering is a methodology focused on building internal developer platforms (IDPs). It aims to reduce the cognitive load on developers by providing self-service tools and automated infrastructure, effectively acting as an evolution of DevOps for larger enterprises.

How does AI affect modern development methodologies?

AI has introduced the Agentic SDLC, where AI agents assist in automated code generation, real-time bug detection, and predictive project management. This allows teams to move away from rigid manual “sprints” toward a more fluid, continuous stream of AI-assisted development.

Agile vs. Waterfall: What is the main difference?

The primary difference lies in the approach to change and sequence:

- Waterfall is a linear, sequential approach where each phase (requirements, design, etc.) must be completed before the next begins. It is best for projects with fixed requirements.

- Agile is iterative and incremental. It focuses on continuous feedback and allows for changes at any stage of the development process.

Which software development methodology is most popular today?

As of 2026, Agile (specifically Scrum) remains the most widely used methodology due to its flexibility. However, it is increasingly being integrated with DevOps practices and AI-augmented workflows to accelerate delivery cycles and improve code quality.