PyTorch in virtually all cases. The clear exceptions are a specific edge or mobile deployment requirement where TFLite is mandatory, a TPU-native infrastructure where JAX’s performance advantage is real and accessible, or an inherited TFX pipeline where the migration cost to PyTorch would be prohibitive. Outside these scenarios, choose PyTorch and do not second-guess it.

PyTorch vs TensorFlow: What’s the difference and which one wins

15 minutes read

15 minutes read

Content

For years, the AI community operated under the following fiction: PyTorch was the scrappy researcher’s tool, TensorFlow was the enterprise production workhorse, and never the twain shall meet. That binary is now definitively dead.

The ecosystem has undergone a tectonic realignment. PyTorch has achieved near-hegemony in both research and production. TensorFlow and its Keras 3 successor have pivoted to a compelling multi-backend “Switzerland” positioning. JAX has emerged as a high-performance challenger backed by Google’s own Gemini infrastructure.

This guide offers clear definitions of what is PyTorch and TensorFlow, as well as walks you through TensorFlow vs PyTorch genuine strengths, financial implications, and a scenario-based decision flowchart you can actually use.

PyTorch: Why do we call it a king of ecosystem

PyTorch is an open-source machine learning framework developed by Meta’s AI Research lab (FAIR), released in 2016. It provides a dynamic computation graph (also called “define-by-run” or “eager execution”), meaning the graph is built on-the-fly as your Python code runs, rather than being compiled ahead of time. This makes it feel like standard Python and makes debugging intuitive. At its core, PyTorch offers: Tensors (multi-dimensional arrays with GPU support), autograd (automatic differentiation for gradient computation), and torch.nn (a module system for building neural networks). It runs on NVIDIA CUDA, AMD ROCm, Apple MPS, and Intel XPU hardware.

PyTorch’s ascendancy is the compounding result of architectural decisions made in 2016-2017 that proved prescient for the transformer era. The dynamic computation graph was initially mocked as unscalable. It turned out to be exactly what researchers needed when the field pivoted from CNNs to attention mechanisms, where control flow is often data-dependent.

Today, PyTorch powers over 70% of papers on arXiv and virtually every frontier model lab: OpenAI, Meta AI, Mistral, Stability AI, and Cohere all build on PyTorch as their primary framework.

What is “Hugging Face” and what effect does it have on PyTorch?

Hugging Face is an AI company that built the dominant open-source ML tooling hub. Its Hub hosts over 500000 pre-trained model checkpoints, virtually all as PyTorch files. Its libraries (transformers, diffusers, peft, accelerate) are the standard toolkit for modern NLP, computer vision, and generative AI work. Think of it as the npm registry + GitHub for ML models, with first-class PyTorch integration throughout.

No single factor has done more to entrench PyTorch than the Hugging Face ecosystem. With 500000+ models distributed as PyTorch checkpoints, there is now a self-reinforcing network effect that is structurally difficult to disrupt.

- Transformers library: The de facto standard for NLP, vision, and multimodal models – PyTorch-first by design

- PEFT library: Parameter-efficient fine-tuning (LoRA, QLoRA, prefix tuning) – PyTorch native

- Accelerate: Distributed training abstraction that scales from laptop to 8,192 GPUs seamlessly

- Diffusers: The definitive library for diffusion model inference and training

- Datasets: Standardized data pipelines deeply integrated with PyTorch DataLoaders

Hardware-aware compilation: torch.compile

Torch.compile (introduced in PyTorch 2.0) is a JIT (Just-In-Time) compiler that traces your model’s compute graph and uses TorchInductor as a backend to generate optimized CUDA/C++ kernels. JIT compilation means converting Python code to optimized machine code at runtime rather than ahead of time. XLA (Accelerated Linear Algebra) is a domain-specific compiler for linear algebra (originally Google’s) that PyTorch can also target via torch_xla.

Together, these tools close much of the performance gap between PyTorch’s flexible eager mode and TensorFlow’s traditionally graph-optimized execution.

The Developer Experience (DX) advantage

Eager execution implies that operations run immediately when called, like standard Python. You can inspect any tensor’s value at any line of code, use native print() and pdb for debugging, and write data-dependent if/for control flow naturally. Graph mode (TF 1.x’s original model) requires you to first define a computation graph, then execute it in a “session”, which is powerful for optimization but deeply hostile to interactive debugging. TensorFlow 2.x adopted eager execution by default, largely in response to PyTorch’s superior developer experience.

PyTorch’s mental model maps directly onto standard Python. Debugging a PyTorch model means using pdb, setting breakpoints, inspecting tensors with .shape and .grad – tools every Python developer already knows.

TensorFlow and Keras 3: The “Switzerland” among frameworks

TensorFlow is an open-source ML framework developed by Google Brain and released in 2015. It was originally built around static computation graphs (you define the full graph first, then execute it) which enabled aggressive compiler optimizations but made debugging painful.

TensorFlow 2.x (2019) adopted eager execution by default and reorganized around Keras as its primary high-level API. TensorFlow’s production ecosystem includes TF Serving (model deployment server), TFX (ML pipelines), TFLite (edge/mobile inference), TF.js (browser inference), and tight integration with Google Cloud’s Vertex AI platform.

TensorFlow was one of last year’s strategic reinventions. The narrative that “TensorFlow is dead” is both wrong and unhelpfully reductive. What has died is its claim to research dominance. What has survived and thrived, is its production infrastructure and, most importantly, Keras 3.

The Keras 3.0: From introduction to revolution

Keras was originally a high-level neural network API designed to be user-friendly and modular. From 2019-2023, it was tightly bundled with TensorFlow as tf.keras. Keras 3.0 (late 2023) is a complete re-architecture: it is now a backend-agnostic deep learning library that can execute on TensorFlow, PyTorch, or JAX interchangeably. You select your backend via an environment variable (KERAS_BACKEND=torch). A single Keras 3 model can be trained on PyTorch for research, deployed via TensorFlow for production, and benchmarked with JAX for performance, from the same Python code.

This is arguably the most underrated development in the 2024-2025 framework landscape.

For organizations currently on TF 2.x with significant Keras usage, migrating to Keras 3 with the PyTorch backend offers the lowest-risk path to accessing the PyTorch ecosystem while preserving existing model investments. This is the migration path that minimizes technical debt write-offs.

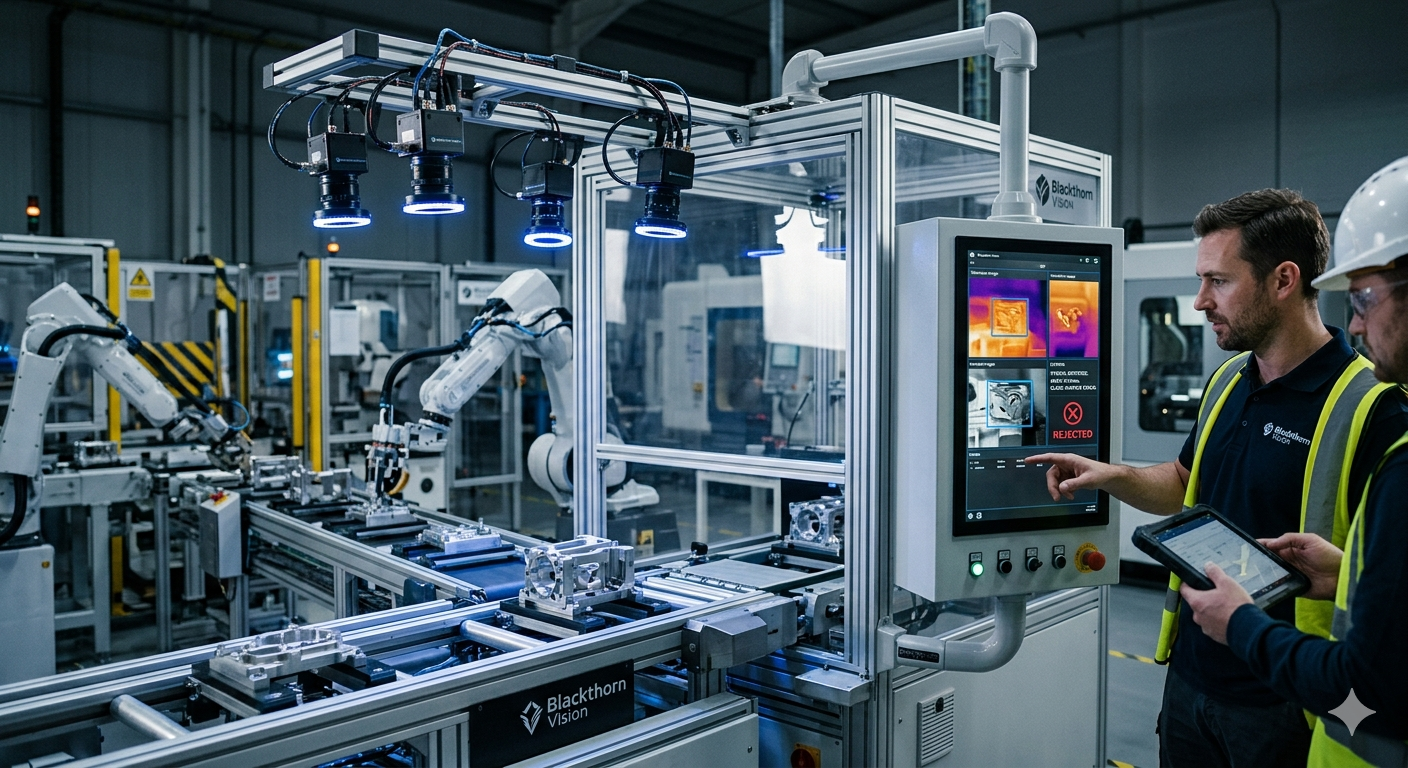

TFX and Production-Grade MLOps

MLOps (Machine Learning Operations) is the discipline of deploying, monitoring, and maintaining ML models in production, analogous to DevOps for software. It covers data pipelines, model versioning, performance monitoring, retraining triggers, and governance.

TFX (TensorFlow Extended) is Google’s end-to-end MLOps platform built on TensorFlow. It provides modular components: ExampleGen (data ingestion), StatisticsGen (automated data profiling), Transform (feature preprocessing with lineage), Trainer (training with checkpointing), Evaluator (validation gates), and Pusher (deployment). The ML Metadata store tracks full artifact lineage – critical for regulated industries.

TFX remains the most complete end-to-end ML pipeline framework available. For organizations requiring rigorous data validation, lineage tracking, and schema enforcement, TFX provides capabilities with no direct equivalent in the PyTorch ecosystem. PyTorch teams typically assemble equivalent functionality from multiple third-party tools (MLflow, Evidently AI, Great Expectations), incurring both integration cost and maintained surface area.

Edge & Mobile: TensorFlow’s unbeatable advantage

TFLite (TensorFlow Lite), now rebranded as LiteRT, is a lightweight runtime for deploying ML models on mobile (Android/iOS), embedded Linux, and microcontrollers. It supports quantization (reducing model precision to int8/float16 for speed and size), hardware acceleration via GPU Delegates (Adreno, Mali, Apple GPU), and NNAPI (Android Neural Networks API). TF.js runs TensorFlow models directly in web browsers using WebGL or WebGPU. TFLite Micro runs on microcontrollers with as little as 16KB of RAM. Collectively, LiteRT runs on over 4 billion devices worldwide.

- TFLite Micro: Runs on microcontrollers with as little as 16KB of RAM, no OS required

- GPU Delegate: Hardware-accelerated inference on Adreno, Mali, and Apple GPUs

- TF.js: Native browser inference with WebGL/WebGPU backends

- MediaPipe: Pre-built on-device ML pipelines for vision and audio tasks

- NNAPI: Android Neural Networks API integration for mobile SoC accelerators

TensorFlow/TFLite is the clear choice for edge deployment. PyTorch Mobile exists but has significantly less production adoption. ExecuTorch (Meta’s new edge runtime) was promising but early-stage as of 2025.

The third player – JAX

JAX is not a framework in the traditional sense. JAX (Just After eXecution) is a Google Research library that brings composable function transformations to NumPy-compatible Python code. It is not a framework in the traditional sense, it is a functional transformation engine built on top of XLA. Its four core primitives are: jit (compile a function to fast XLA code), grad (compute gradients via automatic differentiation), vmap (vectorize a function across a batch dimension, or “auto-batching”), and pmap (parallelize across multiple devices/TPUs). These four primitives can be arbitrarily nested and composed, which is JAX’s key differentiator and the source of its extraordinary performance ceiling.

Beyond deep learning: JAX’s scientific computing advantage

Automatic differentiation is the algorithmic technique for computing exact derivatives (gradients) of any computable function, not just hand-coded mathematical expressions. All three frameworks implement autodiff – the computational engine behind backpropagation in neural networks.

JAX’s autodiff is notable because it can differentiate through arbitrary Python control flow, work in higher-order settings (gradient of a gradient), and compose with its other transformations (e.g., take the gradient of a vmapped function). This makes it powerful for scientific applications like physics-informed neural networks (PINNs), optimal control, and molecular dynamics – domains where you need to differentiate through a simulation.

- Physics simulations: Brax (rigid body), MuJoCo JAX, FEniCS-JAX (finite element methods)

- Probabilistic programming: NumPyro, BlackJAX, Pyro-ppl (JAX backend)

- Molecular dynamics: AlphaFold 2 & 3 are both JAX-native

- Reinforcement learning: PureJaxRL achieves 1000× speedup by running entire RL loops on-device

- Quantum computing: PennyLane, Qiskit JAX backend for quantum ML

Functional Programming vs. Objects: The paradigm shift

Functional programming is a paradigm where functions are pure (no side effects – same input always produces same output) and data is immutable (you never modify in place; you return new values). JAX requires this because XLA’s compiler needs to fully trace a function’s computation graph, which is impossible if arbitrary Python state can be mutated mid-trace.

In practice: no a += b (use a = a + b), explicit PRNG key splitting instead of global random state, and model parameters stored as explicit dictionaries (“pytrees”) rather than object attributes. The payoff: JAX functions that pass the purity constraint can be JIT-compiled, vectorized, and distributed with a single decorator — a capability no other ML framework offers.

| Concept | PyTorch Approach | JAX Approach |

| Model state | nn.Module attributes (mutable) | Explicit pytree / parameter dict (immutable) |

| Training loop | loss.backward(), optimizer.step() | jit(update_fn)(state, batch) |

| Random numbers | Global RNG state | Explicit PRNG key splitting |

| In-place ops | Supported (a += b) | Forbidden — use a = a + b |

| Debugging | pdb + print tensors | Challenging under jit |

The Gemini pedigree

TPUs (Tensor Processing Units) are Google’s custom ASICs (Application-Specific Integrated Circuits) designed specifically for matrix multiplication – the core operation of neural networks. They differ from GPUs in their programming model: TPUs execute compiled XLA programs rather than CUDA kernels. JAX compiles to XLA natively, giving it a structural advantage on TPUs that CUDA-based frameworks (PyTorch, TF on GPU) cannot fully replicate.

Google’s Gemini, PaLM 2, and Gemma models are all trained in JAX on TPU pods at scales involving thousands of chips simultaneously. For organizations with GCP TPU access, the JAX + TPU combination can deliver 2-5 times more throughput per dollar over GPU equivalents on large-scale training.

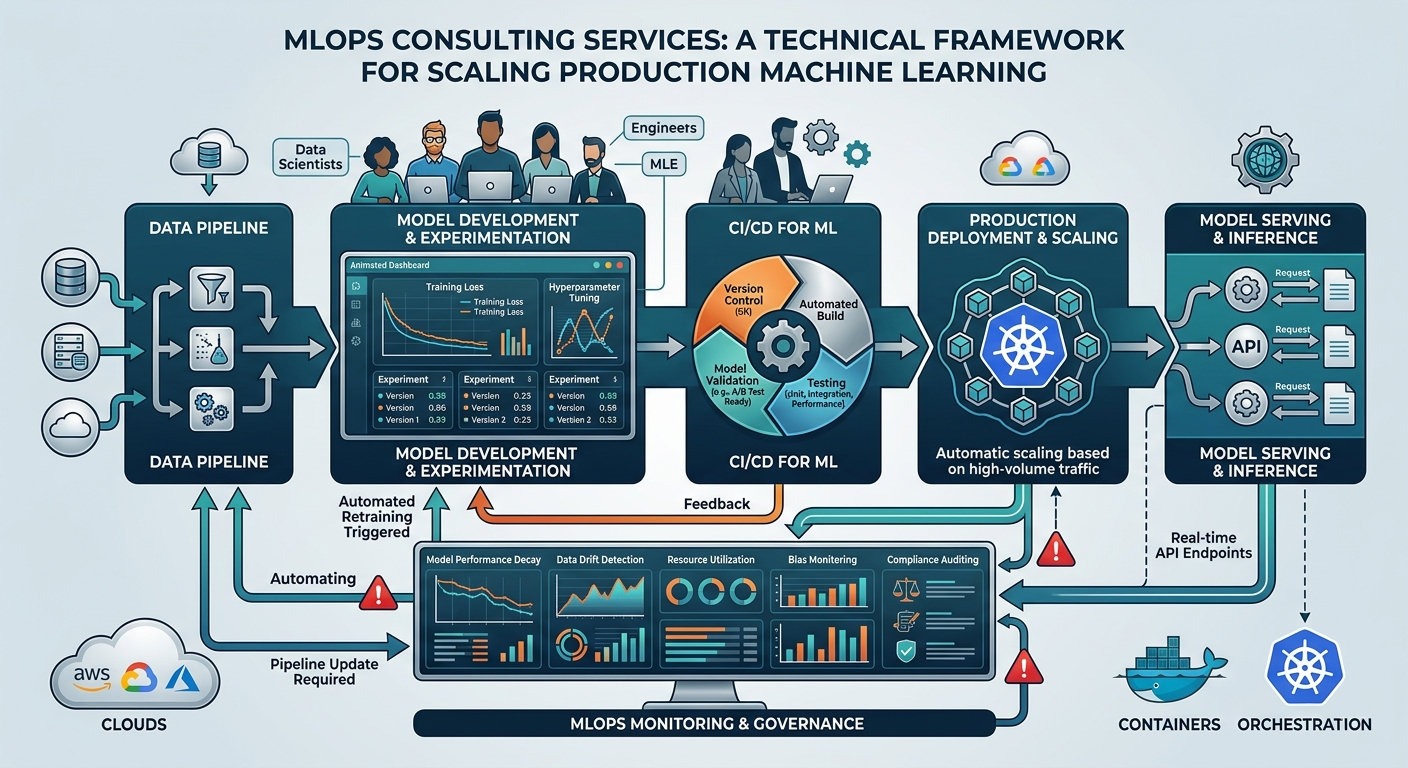

PyTorch vs TensorFlow: Financial and infrastructural considerations

Framework selection has direct and material financial implications across cloud infrastructure, talent markets, and long-term technical debt. Engineering leaders who treat PyTorch and TensorFlow comparison as a purely technical discussion are leaving significant value on the table.

On the talent side, the labor market has moved decisively toward PyTorch. Survey data from the 2024 Stack Overflow and Kaggle ML surveys show PyTorch at 60–70% of primary framework usage. JAX expertise commands a 15-25% salary premium. Most university ML programs now teach PyTorch as the primary framework. Hence, the contractor availability ratio is 5-8 times higher for PyTorch than TF or JAX.

| Migration path | Timeline | Eng-months | Risk |

| TF 1.x → TF 2.x / Keras 3 | 3–6 months | 8–15 | Medium |

| TF 2.x → PyTorch | 6–12 months | 20–40 | High |

| PyTorch → JAX | 4–8 months | 15–25 | High |

| Any → Keras 3 (multi-backend) | 2–4 months | 5–12 | Low-Medium |

Decision-making process: Three most common scenarios

TensorFlow and PyTorch have both very distinctive strengths and application area. Therefore, framework selection should be driven by your specific constraints and objectives. Here are the three most common decision contexts:

Scenario A: Series A founder building an AI writing assistant for legal teams

You have closed your Series A, signed enterprise pilot customers, and need to ship a working product before the pilot window closes. Your team is small, two or three strong Python developers who have not trained a model before. Every week of ramp-up time is a week of runway without progress visible to customers.

The base models you will build on (Llama 3, Mistral, or a legal-domain variant) are all PyTorch checkpoints on Hugging Face. The fine-tuning libraries (PEFT for LoRA, Accelerate for scaling, Transformers for the model architecture) are PyTorch-native throughout. The inference server you will most likely deploy – vLLM – is PyTorch-native. The typical workflow is: pull a checkpoint, apply LoRA fine-tuning on your annotated legal corpus, evaluate against attorney feedback, iterate, then ship via vLLM.

A developer fluent in this cycle can move from experiment to deployed feature in under two weeks.

Scenario B: Running AI infrastructure at a healthcare insurer with 50 million patient records

You operate under HIPAA, state insurance regulations, and an internal compliance function that reviews every new system before it touches patient data. Your models inform prior authorization decisions and fraud detection – processes where a drifting model does not frustrate a user, it denies a patient medically necessary care.

Tensor Flow makes those deployments auditable and defensible under regulatory scrutiny. Its ExampleGen and StatisticsGen components automatically profile incoming data against defined schemas, flagging drift before it reaches the model. The Evaluator enforces validation gates – a model version that does not meet pre-defined fairness and accuracy thresholds cannot be pushed to production. The ML Metadata store maintains a traceable chain from raw data to model decision, which is what a regulator will ask for.

Assembling this from PyTorch third-party tools is possible but leaves you integrating and maintaining a bespoke compliance stack with no single owner when something goes wrong during an audit.

For any new greenfield project that does not touch existing infrastructure, Keras 3 with a PyTorch backend is a legitimate architecture. It gives you access to the modern model ecosystem without forcing a migration decision on production systems that are working reliably.

Scenario C: Training neural surrogates for climate simulation at 1km resolution

Your models are not just fitting to observed data, they are constrained by the governing equations of fluid dynamics, thermodynamics, and atmospheric chemistry. The gradients must flow through the physics, not just around it. This is where JAX‘s capabilities become crucial.

JAX’s grad can differentiate through arbitrary Python control flow, including iterative solvers and conditional branches that appear inside a simulation loop. Its vmap vectorizes naturally across the spatial grid, parallelizing computation over thousands of grid points simultaneously.

The problem requires differentiating through physical constraints in ways other frameworks handle awkwardly.

PyTorch vs TensorFlow: Which one to choose and when

The “TensorFlow or PyTorch framework war” formulation was always somewhat misleading, and it is even more so in 2026. The real question is not which framework wins but which framework is structurally aligned with your team’s constraints, objectives, and ecosystem dependencies.

The TensorFlow vs PyTorch practical guidance distills to three clean directions:

- Default to PyTorch for any new deep learning, NLP, or computer vision project. The ecosystem gravity is overwhelming and only continues to compound.

- Maintain TF investments; use Keras 3 as the bridge. Choose proactively for edge/mobile, enterprise MLOps pipelines, and regulated environments.

- Invest in JAX intentionally when you need TPU-scale performance, scientific computing integration, or novel algorithm development. Budget honestly for the paradigm shift.

Choose deliberately. Learn the key difference between TensorFlow and PyTorch. Budget honestly for the hidden costs. And revisit this decision annually as the landscape moves fast enough that last year’s calculus may not survive contact with this year’s ecosystem.

The frameworks themselves are converging on shared standards (ONNX, SafeTensors, StableHLO) that will make the ecosystem less fragmented over time. If you need a consultant or a technical partner able to lead you to the right solutions, TensorFlow vs PyTorch comparison, and any other detail – contact us!

FAQ

What should I choose – PyTorch or TensorFlow – for the fastest ROI of my new AI project?

What’s the difference between PyTorch and TensorFlow in context of reinforcement learning?

PyTorch historically dominated applied reinforcement learning research, and it remains the right default for most commercial RL applications. The tooling (Stable Baselines 3, CleanRL, RLlib) is mature, well-documented, and PyTorch-native.

However, JAX has made significant inroads specifically in high-performance RL, where the ability to run entire training loops on GPU or TPU hardware delivers speedups of up to 1000 times over CPU-based approaches. For research-grade RL or applications requiring very high training throughput, JAX’s would serve better.

Is Keras now a separate framework or just a part of TensorFlow?

Keras 3 is now architecturally independent from TensorFlow. It is a standalone interface that can use TensorFlow, PyTorch, or JAX as its underlying computation engine, similar to how a word processor can save files in multiple formats without being owned by any of them.

From 2019 to 2023, Keras was bundled with TensorFlow and distributed as part of it. That coupling has been severed and now you can install Keras 3 independently and choose your backend at runtime.

Do I need to worry about JAX advantage?

For most commercial AI applications, this advantage is irrelevant. It becomes real in scientific computing, simulation-based optimization, and very large scale training on TPU infrastructure.