While DevOps focuses on the continuous development and deployment of code, MLOps adds data and models to the mix. In MLOps, you aren’t just tracking code changes; you are tracking “training-serving skew,” data drift, and model decay. If the data changes, the software performance changes—even if the code remains the same.

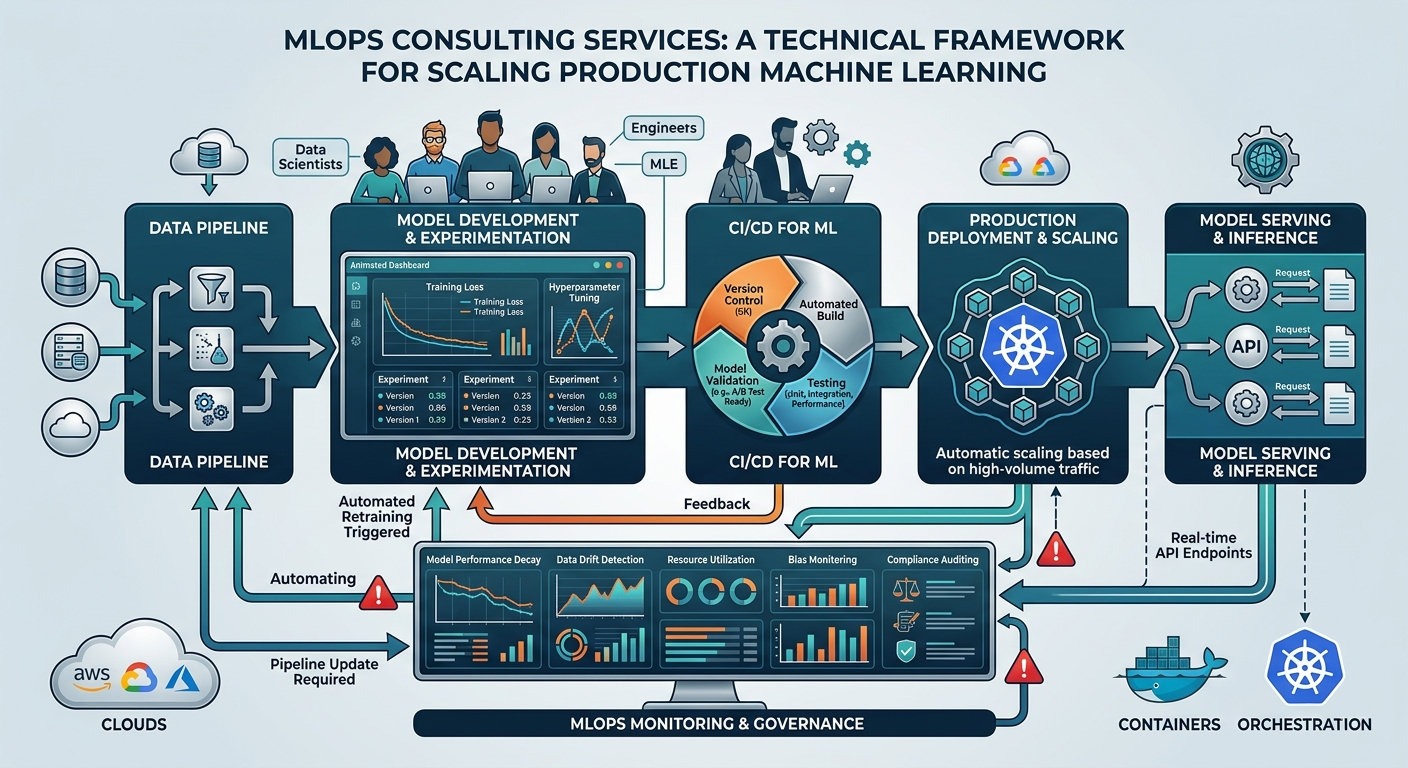

MLOps consulting services: A technical framework for scaling production machine learning

16 minutes read

Sooner or later, every engineering organization running machine learning faces the same dilemma: build the infrastructure to operationalize its models in-house, or bring in people who have done it before. The hesitation is not baseless – by commonly cited estimates, roughly every second AI project fails to make it from prototype to production, and poorly set up infrastructure (pipelines, deployment processes, monitoring, and governance) is a frequent reason.

This guide breaks down what MLOps consulting actually delivers, how to assess where your organization stands today, and how to structure an engagement that produces infrastructure your team owns long-term.

What are MLOps consulting services?

MLOps – short for Machine Learning Operations – applies the principles of DevOps to the machine learning lifecycle. Where DevOps manages software releases, MLOps manages the full pipeline from raw data to a model serving predictions in production.

MLOps consulting services are engagements in which external specialists design, build, or improve that pipeline for your organization. The scope typically spans:

- Infrastructure architecture: selecting and configuring the tools that run your pipelines

- Pipeline automation: eliminating manual steps in training, validation, and deployment

- Monitoring and observability: detecting when model performance degrades before it affects business outcomes

- Governance and compliance: ensuring models are auditable, reproducible, and aligned with regulatory requirements

MLOps consulting is not data science. Consultants in this space generally don’t build your models; they build the systems that make those models reliable, repeatable, and maintainable at scale.

Why does it matter to the business? Without MLOps infrastructure, every new model deployment is a manual, high-risk event. Teams re-solve the same engineering problems repeatedly, models drift silently in production, and the cost of each iteration compounds. A well-structured MLOps practice turns one-off deployments into a repeatable capability.

The MLOps maturity model: Assessing your infrastructure

Before choosing tools or hiring consultants, you need an honest read on where your organization stands at the moment. The MLOps maturity model provides that baseline. It describes three levels of operational sophistication, each with a distinct cost profile, risk exposure, and ceiling on how fast your team can ship.

From there, you can decide which level your business actually needs and what it costs to stay where you are.

Level 0: Manual

At Level 0, ML workflows are largely script-driven and human-dependent. Data scientists train models locally, hand off artifacts to engineering teams, and deployments happen through ad hoc processes, often involving manual file transfers, undocumented environment configs, and tribal knowledge.

Characteristics:

- No pipeline automation; each training run is triggered manually

- Models are rarely retrained after initial deployment

- No systematic monitoring for drift or degradation

- Experiment tracking, if it exists, lives in spreadsheets or notebooks

- Deployment is a one-off event, not a repeatable process

Level 0 is viable for organizations running one or two low-stakes models infrequently. It becomes a liability the moment you need to update models regularly, operate multiple models in parallel, or demonstrate reproducibility for audit or compliance purposes. The hidden cost is engineer time, as every deployment pulls senior people into manual coordination work.

Level 1: Automated pipeline

At Level 1, the training pipeline is automated end-to-end. Data ingestion, preprocessing, training, evaluation, and model registration happen without manual intervention, triggered either on a schedule or by data events. The model artifact that enters production is the direct output of a versioned, reproducible pipeline.

Characteristics:

- Automated retraining triggered by schedules or data thresholds

- Feature stores or structured feature engineering pipelines are in place

- Experiment tracking with versioned datasets, parameters, and metrics

- Model registry manages artifact lineage and promotion gates

- Monitoring covers basic performance metrics and data distribution shifts

Level 1 is the target for most production ML teams. It removes the bottleneck of manual retraining, reduces deployment risk through reproducibility, and gives leadership visibility into model performance over time. Most organizations working with an MLOps consultant are moving from Level 0 to Level 1, and this transition delivers the highest return relative to investment.

Level 2: CI/CD automation

Level 2 extends automation into the deployment and validation layer itself. Model updates move through a formal CI/CD pipeline: automated testing, staged rollouts (canary or blue/green deployments), and rollback triggers based on live performance signals. The pipeline itself – not just the model – is under version control and continuously tested.

Characteristics:

- Full CI/CD pipeline for both model code and infrastructure

- Automated A/B testing and staged deployment with statistical guardrails

- Shadow mode and canary deployments to validate models before full traffic exposure

- Automated rollback on performance degradation signals

- Pipeline components are modular, reusable, and independently deployable
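To make the canary idea above concrete, here is a minimal sketch of deterministic traffic splitting. It assumes each request carries a stable identifier; the function name `route_request` and the hashing scheme are illustrative choices, not the behavior of any particular serving platform.

```python
import hashlib


def route_request(request_id: str, canary_fraction: float) -> str:
    """Return "canary" or "stable" for a request, deterministically.

    Hypothetical helper: hashing the ID keeps a given caller on the same
    model version for the whole rollout, which makes comparisons cleaner.
    """
    if not 0.0 <= canary_fraction <= 1.0:
        raise ValueError("canary_fraction must be between 0 and 1")
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "canary" if bucket < canary_fraction else "stable"


if __name__ == "__main__":
    sample = [route_request(f"user-{i}", 0.10) for i in range(10_000)]
    print("canary share:", sample.count("canary") / len(sample))
```

Raising `canary_fraction` step by step widens exposure without reshuffling which callers already see the new model.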

Level 2 is appropriate for organizations where ML is a core product differentiator, the cost of a bad model update is high, and deployments happen weekly or faster.

A consultant’s first job is typically to place your organization accurately on this model. Not where you aspire to be, but where your processes, tooling, and team actually operate today. That audit establishes the baseline and sets the direction for everything that follows.

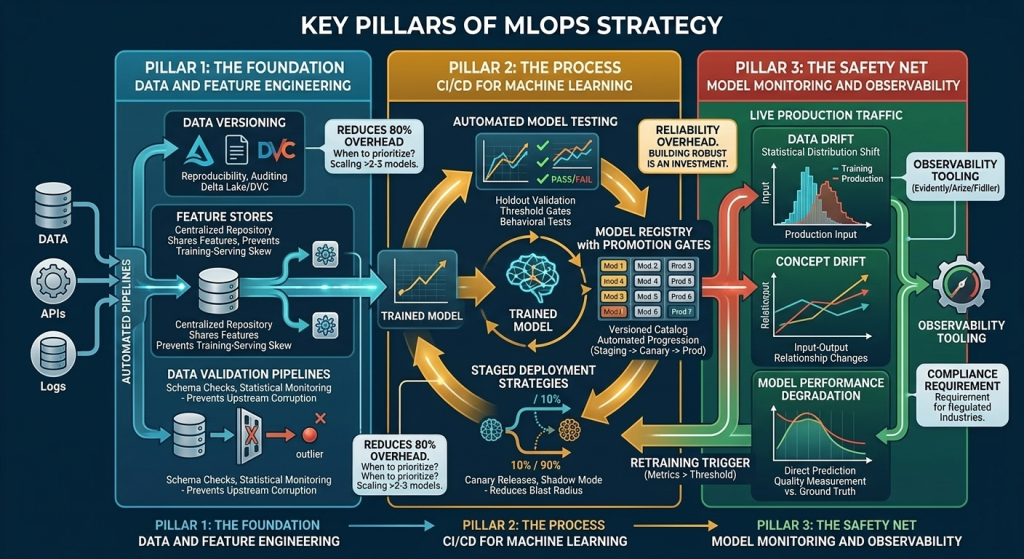

Key pillars of MLOps strategy

A durable MLOps strategy rests on three functional areas. Each one is addressable independently, but most effective when designed together. Gaps in any one of them tend to surface as production failures, cost overruns, or compliance exposure.

The foundation: Data and feature engineering

This pillar covers how raw data is ingested, transformed, validated, and made available for training and inference – consistently, at scale, and with full lineage.

The core components:

- Data versioning: Tracking which dataset a model was trained on, so results are reproducible and audits are traceable. Tools like DVC or Delta Lake handle this at the storage layer.

- Feature stores: Centralized repositories where engineered features are computed once and shared across models. This eliminates redundant computation and, more importantly, prevents training-serving skew: the condition where features computed differently at training time versus inference time silently degrade model performance.

- Data validation pipelines: Automated schema checks and statistical distribution monitoring that catch upstream data quality issues before they reach model training. Without this, a schema change in a source system can corrupt a retraining run with no visible error.
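The validation step described above can be sketched in a few lines. This is a simplified illustration using only the standard library: the schema, the column names, and the 25% mean-shift threshold are all assumptions, and a production pipeline would normally rely on a dedicated validation tool with richer statistical checks.

```python
from statistics import mean

# Hypothetical schema for illustration only.
EXPECTED_SCHEMA = {"user_id": int, "amount": float, "country": str}


def validate_schema(rows, schema):
    """Return a list of human-readable schema violations (empty if clean)."""
    errors = []
    for i, row in enumerate(rows):
        missing = schema.keys() - row.keys()
        extra = row.keys() - schema.keys()
        if missing:
            errors.append(f"row {i}: missing columns {sorted(missing)}")
        if extra:
            errors.append(f"row {i}: unexpected columns {sorted(extra)}")
        for column, expected in schema.items():
            if column in row and not isinstance(row[column], expected):
                actual = type(row[column]).__name__
                errors.append(f"row {i}: {column} is {actual}, expected {expected.__name__}")
    return errors


def mean_shift_exceeds(reference, current, max_relative_shift=0.25):
    """Flag a numeric column whose mean moved by more than the allowed fraction."""
    ref_mean = mean(reference)
    if ref_mean == 0:
        return mean(current) != 0
    return abs(mean(current) - ref_mean) / abs(ref_mean) > max_relative_shift


if __name__ == "__main__":
    batch = [{"user_id": "1", "amount": 9.5}]  # wrong type, missing column
    for problem in validate_schema(batch, EXPECTED_SCHEMA):
        print(problem)
```

Running checks like these at the pipeline entry point turns a silent upstream schema change into a visible, blocking error.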

Feature engineering is often cited as consuming up to 80% of a data scientist’s time on a given project. A well-architected feature store with reusable, validated features compresses that overhead significantly on every subsequent model. It also reduces the risk that two teams independently compute the same feature differently – a common source of inconsistent model behavior across products.
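The core idea behind avoiding training-serving skew is that feature logic lives in exactly one place. The sketch below shows that principle without a feature store product: the feature names and the `build_features` function are hypothetical, and a real feature store adds storage, versioning, and low-latency lookup on top.

```python
import math
from datetime import datetime


def build_features(transaction: dict) -> dict:
    """Single source of truth for feature logic, used by training and serving."""
    ts = datetime.fromisoformat(transaction["timestamp"])
    return {
        "log_amount": math.log1p(transaction["amount"]),
        "hour_of_day": ts.hour,
        "is_weekend": int(ts.weekday() >= 5),  # Saturday=5, Sunday=6
    }


def build_training_rows(history):
    """Offline path: featurize historical records for training."""
    return [build_features(t) for t in history]


def build_serving_row(request):
    """Online path: featurize a single live request with the same code."""
    return build_features(request)


if __name__ == "__main__":
    tx = {"amount": 120.0, "timestamp": "2024-01-06T14:30:00"}
    # Both paths produce identical features because they share one function.
    print(build_training_rows([tx])[0] == build_serving_row(tx))
```

Because both paths call the same function, a change to a feature definition cannot reach training without also reaching inference.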

When should you prioritize this first? If your organization runs more than two or three models, or if different teams share data sources, invest in this layer before scaling anything else.

The process: CI/CD for machine learning

Software CI/CD automates the path from code commit to production deployment. ML CI/CD does the same, but the artifact being tested and deployed is a trained model, not a software binary. That distinction introduces complexity that standard DevOps pipelines don’t account for.

A production-grade ML CI/CD pipeline includes:

- Automated model testing: Beyond unit tests on code, this means validation against holdout datasets, performance threshold gates, and behavioral tests (does the model still perform adequately on known edge cases?).

- Model registry with promotion gates: A versioned catalog where models move through stages (staging, canary, production) based on automated evaluation criteria, not manual approval alone.

- Staged deployment strategies: Canary releases expose new models to a fraction of live traffic before full rollout. Shadow mode runs a new model in parallel with the production model, comparing outputs without serving them to users. Both reduce the blast radius of a bad model update.

- Automated rollback: Predefined performance thresholds that trigger an automatic reversion to the previous model version if a new deployment degrades key metrics.
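As a rough illustration of promotion gates and automated rollback, the sketch below encodes both as plain threshold checks. The metric (accuracy), the threshold values, and the names `GateConfig`, `can_promote`, and `should_roll_back` are assumptions made for this example; real gates usually combine several metrics and behavioral tests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateConfig:
    # All thresholds are illustrative placeholders.
    min_accuracy: float = 0.90         # absolute floor on the holdout set
    max_regression: float = 0.01       # allowed drop versus the production model
    rollback_error_rate: float = 0.05  # live error rate that triggers reversion


def can_promote(candidate_accuracy, production_accuracy, config):
    """Promotion gate: the candidate must clear the floor and not regress."""
    meets_floor = candidate_accuracy >= config.min_accuracy
    no_regression = candidate_accuracy >= production_accuracy - config.max_regression
    return meets_floor and no_regression


def should_roll_back(live_errors, live_requests, config, min_requests=500):
    """Rollback trigger: only act once enough live traffic has been observed."""
    if live_requests < min_requests:
        return False
    return live_errors / live_requests > config.rollback_error_rate


if __name__ == "__main__":
    cfg = GateConfig()
    print("promote:", can_promote(0.93, 0.92, cfg))
    print("roll back:", should_roll_back(60, 1000, cfg))
```

The `min_requests` guard is a design choice: it keeps a handful of early errors from reverting a deployment before the sample is meaningful.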

Manual deployment processes are slow and error-prone. More critically, they don’t scale. Each new model or update cycle requires the same engineering coordination overhead. CI/CD automation converts deployment from a project into a routine operation, compressing cycle time and freeing engineering capacity for higher-value work.

There is a trade-off: building a robust ML CI/CD pipeline requires upfront investment in the form of several weeks of engineering time and meaningful infrastructure cost.

The safety net: Model monitoring and observability

A model that performs well at launch will not necessarily perform well six months later. Data distributions shift, user behavior changes, and upstream systems evolve. Systematic monitoring lets you spot model degradation before it surfaces as a business problem: falling conversion rates, rising fraud losses, or a growing number of customer complaints.

A production monitoring stack addresses three distinct failure modes:

- Data drift: The statistical distribution of input features in production diverges from the training distribution. The model is being asked to make predictions on data it hasn’t seen before.

- Concept drift: The relationship between inputs and the correct output changes over time. A fraud detection model trained pre-pandemic may classify post-pandemic transaction patterns differently than a human reviewer would.

- Model performance degradation: Direct measurement of prediction quality against ground truth labels, where ground truth is available with an acceptable lag.
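Data drift, the first failure mode above, is often quantified with a distribution-distance score. The sketch below computes the Population Stability Index (PSI) for one numeric feature; it is one common choice among several, not necessarily what any given monitoring platform uses. The equal-width binning and the `1e-6` floor for empty bins are simplifying assumptions.

```python
import math


def population_stability_index(reference, production, bins=10):
    """PSI between two numeric samples, using equal-width bins over the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant feature

    def bin_shares(values):
        counts = [0] * bins
        for v in values:
            index = int((v - lo) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # Small floor so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    ref, prod = bin_shares(reference), bin_shares(production)
    return sum((p - r) * math.log(p / r) for r, p in zip(ref, prod))


if __name__ == "__main__":
    training = [i / 100 for i in range(1000)]
    shifted = [v + 5 for v in training]
    print("no drift:", population_stability_index(training, training))
    print("shifted :", population_stability_index(training, shifted))
```

A commonly quoted rule of thumb treats PSI below roughly 0.1 as stable and above roughly 0.25 as a significant shift, but suitable thresholds depend on the feature and should be calibrated on your own data.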

Observability tooling – platforms like Evidently, Arize, or Fiddler – automates the detection of these signals and routes alerts to the appropriate teams before degradation becomes a customer-facing event.

Monitoring infrastructure is also the mechanism by which retraining is triggered in a mature pipeline. Rather than retraining on a fixed schedule, a well-instrumented system retrains when drift metrics cross defined thresholds, reducing unnecessary compute while ensuring models stay current.
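A threshold-based retraining trigger of the kind described above might look like the following sketch. The drift score is treated as an opaque number produced by whatever monitoring is in place; the threshold of 0.2 and the requirement of three consecutive breaches are hypothetical settings chosen to show how one-off spikes can be ignored.

```python
from dataclasses import dataclass


@dataclass
class RetrainPolicy:
    drift_threshold: float = 0.2   # hypothetical drift score cutoff
    consecutive_breaches: int = 3  # require persistence before retraining


def should_retrain(drift_scores, policy):
    """True when the most recent N drift scores all exceed the threshold."""
    recent = drift_scores[-policy.consecutive_breaches:]
    return (
        len(recent) == policy.consecutive_breaches
        and all(score > policy.drift_threshold for score in recent)
    )


if __name__ == "__main__":
    daily_scores = [0.05, 0.08, 0.31, 0.27, 0.33]
    print("retrain:", should_retrain(daily_scores, RetrainPolicy()))
```

Requiring several consecutive breaches trades a slightly slower reaction for fewer unnecessary training runs, which is the compute saving the paragraph above refers to.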

For organizations operating in regulated industries such as financial services, healthcare, and insurance, model monitoring logs are increasingly a compliance requirement. Regulators expect evidence that model behavior is being tracked over time and that degradation triggers a defined response process.

These three pillars are tightly interdependent. A feature store without data validation is a single point of failure. A CI/CD pipeline without monitoring has no feedback loop. Monitoring without reproducible pipelines can detect a problem, but can’t efficiently fix it. The consultant’s role is not to assemble them as independent tools, but to design them as a single system.

Why hire an MLOps consultant?

Most engineering teams can build MLOps infrastructure. The main question is whether the time, iteration cost, and organizational risk of building MLOps solutions from scratch are the right use of your team’s capacity. Let’s take a look at the problem and the solution.

4 out of 5 ML models never reach production

Models stall on the way to production for predictable reasons: infrastructure that wasn’t designed for ML workloads, deployment processes that require too much manual coordination, monitoring gaps that make stakeholders reluctant to trust live model outputs, and data pipelines that work in a notebook but break under production load.

These are solved problems. The organizations that have crossed this threshold have left behind reusable patterns, tooling decisions, and failure postmortems. Consultants carry that accumulated knowledge into your environment, which is the core of the value proposition.

Production-ready templates for quicker deployment

A consultant engagement typically begins with infrastructure that already works – pipeline templates, infrastructure-as-code modules, CI/CD scaffolding – adapted to your stack rather than built from zero. That starting point changes the timelines.

An internal team building the same MLOps infrastructure for the first time will spend significant time on tooling evaluation, integration failures, and undocumented edge cases. A consultant who has implemented the same patterns across multiple environments has already absorbed that cost.

Who benefits the most?

- Organizations with a hard deadline on a model launch

- Teams where data scientists are currently absorbing infrastructure work

- Businesses that have already attempted an MLOps build internally and stalled

Cost efficiency issue

Inefficiently run ML workloads are expensive. Training jobs, inference endpoints, and data processing pipelines consume compute continuously, and default configurations are rarely tuned for cost.

Two levers can change the situation:

- Auto-scaling inference: Serving infrastructure that scales down during low-traffic periods and scales up on demand, rather than maintaining peak-capacity instances continuously.

- Spot instance orchestration: Routing fault-tolerant training jobs to preemptible compute at a fraction of on-demand pricing, with automatic checkpointing to handle interruptions.
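Running training on preemptible compute only works if a job can resume where it stopped. The sketch below shows the checkpoint-and-resume pattern in isolation: the JSON checkpoint format, the `interrupt_at` parameter (which simulates a reclaimed instance), and the stand-in "training" step are all assumptions for illustration, not a cloud provider API.

```python
import json
import os
import tempfile


def save_checkpoint(path, state):
    """Write atomically so an interruption never leaves a half-written file."""
    directory = os.path.dirname(os.path.abspath(path))
    with tempfile.NamedTemporaryFile("w", dir=directory, delete=False) as tmp:
        json.dump(state, tmp)
    os.replace(tmp.name, path)


def load_checkpoint(path):
    """Return the saved state, or a fresh state if no checkpoint exists."""
    if not os.path.exists(path):
        return {"epoch": 0, "loss": None}
    with open(path) as f:
        return json.load(f)


def train(path, total_epochs, interrupt_at=None):
    """Resume from the last checkpoint; `interrupt_at` simulates a preemption."""
    state = load_checkpoint(path)
    for epoch in range(state["epoch"], total_epochs):
        if epoch == interrupt_at:
            raise InterruptedError("spot instance reclaimed")
        # Stand-in for one real epoch of training.
        state = {"epoch": epoch + 1, "loss": 1.0 / (epoch + 1)}
        save_checkpoint(path, state)
    return state


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as workdir:
        ckpt = os.path.join(workdir, "checkpoint.json")
        try:
            train(ckpt, total_epochs=5, interrupt_at=3)
        except InterruptedError:
            print("interrupted at epoch", load_checkpoint(ckpt)["epoch"])
        print("finished:", train(ckpt, total_epochs=5))
```

On real spot instances the checkpoint would live on durable storage outside the instance, so that a replacement machine can pick it up.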

Infrastructure inefficiency is one component; another is engineer time. When senior engineers spend cycles on manual deployment coordination or debugging undocumented pipeline failures, that cost doesn’t show up in a cloud bill, but it exists.

Consultant-built infrastructure that your team doesn’t have to maintain at the plumbing level returns that capacity to product work.

Selecting the right stack: Tools and technologies

The right stack is the one your team will actually maintain, not the one with the most promising capabilities. A consultant’s role in this phase is to match tooling to your team’s existing skills, your deployment environment, and your operational capacity, and then to document the decision rationale so that future choices are made consistently. Let’s take a look at different groups of tools.

Orchestration

Orchestration tools manage the sequencing, scheduling, and retry logic of pipeline steps – training runs, data validation jobs, model evaluation, and deployment triggers.

- Apache Airflow remains the default for organizations already running data engineering pipelines. Familiarity and ecosystem breadth are its primary advantages; ML-native features are limited.

- Kubeflow Pipelines is built for Kubernetes-native environments and handles ML-specific concerns (GPU scheduling, artifact lineage) more naturally. Higher operational overhead to run and maintain.

- Prefect and Dagster offer a middle path. They are more developer-friendly than Airflow, but less infrastructure-intensive than Kubeflow. Both have grown their ML ecosystem integrations meaningfully in recent years.
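Whichever orchestrator you choose, the core job is the same: run steps in dependency order and retry transient failures. The toy runner below illustrates that with the standard library only. It is not the API of Airflow, Kubeflow, Prefect, or Dagster, and the step names are hypothetical.

```python
from graphlib import TopologicalSorter  # available in Python 3.9+


def run_pipeline(tasks, dependencies, max_retries=2):
    """Run tasks in dependency order, retrying each failed task up to max_retries times.

    `tasks` maps a step name to a callable; `dependencies` maps a step name
    to the set of steps that must finish before it.
    """
    completed = []
    for name in TopologicalSorter(dependencies).static_order():
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                completed.append(name)
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retries exhausted: stop before downstream steps run
    return completed


if __name__ == "__main__":
    steps = {
        "validate_data": lambda: print("validating data"),
        "train": lambda: print("training"),
        "evaluate": lambda: print("evaluating"),
        "deploy": lambda: print("deploying"),
    }
    order = {"train": {"validate_data"}, "evaluate": {"train"}, "deploy": {"evaluate"}}
    print(run_pipeline(steps, order))
```

Production orchestrators add scheduling, backoff between retries, persistence of run state, and observability on top of this basic loop.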

Tracking

Experiment tracking tools log parameters, metrics, and artifacts across training runs, making results reproducible and comparable.

- MLflow is the most widely deployed open-source option. It integrates with most major frameworks and cloud platforms, and its model registry provides a lightweight promotion workflow.

- Weights & Biases (W&B) offers a richer interface and stronger collaboration features, with a managed hosting option that reduces operational burden. Better fit for teams running high volumes of experiments where visualization and sharing matter.

- Neptune.ai occupies similar territory to W&B with a focus on metadata management at scale.
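To show what an experiment tracker records, independent of any product, here is a minimal sketch that appends one JSON line per training run. The record layout and the function names `log_run` and `best_run` are assumptions for illustration; they are not the API of MLflow, W&B, or Neptune.ai, which provide this along with a UI, artifact storage, and a registry.

```python
import json
import os
import tempfile
import time
import uuid


def log_run(log_path, params, metrics, dataset_version):
    """Append one run record so results stay comparable and reproducible."""
    record = {
        "run_id": uuid.uuid4().hex,
        "logged_at": time.time(),
        "dataset_version": dataset_version,
        "params": params,
        "metrics": metrics,
    }
    with open(log_path, "a") as f:
        f.write(json.dumps(record) + "\n")
    return record["run_id"]


def best_run(log_path, metric):
    """Return the logged run with the highest value for the given metric."""
    with open(log_path) as f:
        runs = [json.loads(line) for line in f if line.strip()]
    return max(runs, key=lambda run: run["metrics"][metric])


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as workdir:
        path = os.path.join(workdir, "runs.jsonl")
        log_run(path, {"learning_rate": 0.1}, {"auc": 0.81}, "2024-05-01")
        log_run(path, {"learning_rate": 0.01}, {"auc": 0.86}, "2024-05-01")
        print(best_run(path, "auc")["params"])
```

Recording the dataset version next to parameters and metrics is what lets a later reader reproduce a result rather than just admire it.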

Serving

Model serving infrastructure handles inference – receiving requests, running predictions, and returning results at the latency and throughput your application requires.

- BentoML and Ray Serve both support multi-model serving and are framework-agnostic, making them well-suited for organizations running heterogeneous model types.

- TorchServe and TensorFlow Serving are tightly coupled to their respective frameworks – lower configuration overhead if you’re standardized on one, a constraint if you’re not.

- Managed options – SageMaker Inference, Vertex AI, Azure ML endpoints – trade configuration flexibility for operational simplicity. They are often the right call for teams without dedicated MLOps engineering capacity.

Implementation roadmap: How to start

Step 1: Discovery and audit

Before introducing any tooling, a consultant needs an accurate picture of your current state. This phase produces a baseline assessment, not a sales pitch for a larger engagement.

What gets examined:

- Existing data pipelines – how data moves from source systems to model training, where manual steps live, and where failures occur

- Current deployment process – how models reach production today, who is involved, and how long it takes

- Monitoring and alerting coverage – what is currently measured, what is invisible

- Team structure and skill distribution – who owns what, where capacity constraints are, and where infrastructure work is currently absorbing data science time

Step 2: MVP pipeline setup

The MVP phase focuses on the highest-leverage improvements, typically automating the training pipeline, introducing experiment tracking, and establishing a model registry with basic promotion gates. The goal is a repeatable, documented path from data to a deployed model, replacing the most manual and error-prone steps in your current process.

Typical deliverables:

- Automated retraining pipeline connected to your data sources

- Experiment tracking configured and integrated with existing workflows

- Model registry with staging and production environments

- Basic data validation checks at pipeline entry points

- Deployment automation for at least one production model

This is not yet a complete Level 2 CI/CD system. Shadow deployments, canary releases, and full monitoring coverage come later.

Step 3: Scaling and governance

With a working baseline in place, this phase extends coverage and adds the controls required by regulated industries and larger engineering organizations.

Scaling priorities:

- Expanding pipeline automation to additional models and teams

- Implementing auto-scaling inference and spot instance orchestration for cost efficiency

- Adding staged deployment strategies (canary, shadow mode) where model update risk justifies the infrastructure

- Building out drift detection and automated retraining triggers

Governance priorities:

- Model lineage documentation – full traceability from raw data to production artifact

- Access controls and audit logging on the model registry and pipeline actions

- Incident response runbooks for model degradation events

- Knowledge transfer – ensuring your internal team can operate, extend, and debug the infrastructure without ongoing consultant dependency
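Model lineage, the first governance item above, comes down to recording enough to tie a production artifact back to its inputs. The sketch below uses content hashes for that purpose. The record fields and function names are hypothetical, and a real registry would store this metadata alongside access controls and audit logs.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Content hash: changes whenever the underlying bytes change."""
    return hashlib.sha256(data).hexdigest()


def lineage_record(dataset_bytes, code_version, params, model_bytes):
    """Tie a model artifact to the exact data, code, and parameters behind it."""
    return {
        "dataset_sha256": fingerprint(dataset_bytes),
        "code_version": code_version,  # for example, a version control commit ID
        "params": params,
        "model_sha256": fingerprint(model_bytes),
    }


def verify_lineage(record, dataset_bytes, model_bytes):
    """Audit check: do these files match what the record claims?"""
    return (
        record["dataset_sha256"] == fingerprint(dataset_bytes)
        and record["model_sha256"] == fingerprint(model_bytes)
    )


if __name__ == "__main__":
    data, model = b"id,amount\n1,9.5\n", b"serialized-model-bytes"
    record = lineage_record(data, "abc123", {"max_depth": 6}, model)
    print("matches:", verify_lineage(record, data, model))
    print("tampered:", verify_lineage(record, data + b"2,3.0\n", model))
```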

At the close of this phase, your team should be able to retrain and deploy a model without external involvement, diagnose a monitoring alert without escalating to the consultant, and onboard a new model to the pipeline without rebuilding infrastructure from scratch.

Summary and next steps

MLOps consulting delivers the most value when it’s scoped as an infrastructure investment, not a service dependency. The organizations that get the most out of these engagements treat the consultant as a builder and knowledge transfer partner, leaving with systems their teams own and can extend, not a black box that requires ongoing external maintenance.

If your company is evaluating MLOps infrastructure investment, the right starting point is a structured audit of your current state. Blackthorn Vision has hands-on experience providing MLOps consulting services. We implement MLOps pipelines across most industries, including regulated ones such as finance and healthcare. We are experienced with every step, from Level 0 to production-grade CI/CD systems.

We help you assess where you are, scope what’s worth building, and deliver infrastructure your team will own long after the engagement ends.

FAQ

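What is the difference between DevOps and MLOps?

While DevOps focuses on the continuous development and deployment of code, MLOps adds data and models to the mix. In MLOps, you aren’t just tracking code changes; you are tracking “training-serving skew,” data drift, and model decay. If the data changes, the software performance changes, even if the code remains the same.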

Does MLOps replace Data Scientists?

Not at all. MLOps empowers Data Scientists. By automating the “plumbing” (data ingestion, infrastructure provisioning, and monitoring), Data Scientists can focus on what they do best: building better algorithms and extracting insights, rather than managing server clusters.

How do we measure the ROI of MLOps consulting?

Success is typically measured by four key metrics:

- Time-to-Market: How quickly can a new model go from an idea to a production API?

- Reliability: Reduction in system downtime caused by data or model errors.

- Scalability: The ability to manage 100 models with the same headcount previously required for 5.

- Compliance: Having a clear audit trail for data usage and model decisions.

Which cloud providers or tools do you support in this framework?

A robust technical framework is usually cloud-agnostic. Whether your stack is built on AWS (SageMaker), Google Cloud (Vertex AI), Azure ML, or open-source tools like Kubeflow and MLflow, the core principles of data versioning, feature stores, and model monitoring remain the same.

How does MLOps help in reducing Technical Debt?

Technical debt in ML often manifests as “spaghetti” data pipelines or undocumented manual steps. A technical framework introduces Automated CI/CD for ML, which ensures every model is versioned, reproducible, and tested before hitting production. This prevents the “black box” syndrome where only one data scientist knows how a model works.

At what stage of growth should a company invest in MLOps consulting?

You should consider MLOps services when you move from “Experimental ML” (notebooks and manual deployments) to “Production ML.” If your team is spending more than 30% of their time manually fixing pipelines or if you have no clear way to audit why a model made a specific prediction, it’s time to implement a formal framework.