A proof of concept delivers a technical result in six to twelve weeks. A focused production deployment typically reaches measurable ROI within six to twelve months of go-live, depending on implementation cost, workflow value, and adoption rate. Broader programmes spanning multiple use cases operate on a twelve-to-twenty-four-month horizon.

AI software development services: A complete guide for business leaders and decision-makers

31 minutes read

Artificial Intelligence software development services are everywhere now. Organisations across industries are using AI to cut costs, make better decisions, offer a more personalised user experience, and lift as much operational burden from people’s shoulders as possible. The organisations that succeed with AI integration are making deliberate, well-sequenced investments with the right technical partners – the companies developing AI technology.

This guide is written for business leaders who need a clear, practical understanding of AI services and of what professional AI development looks like: what services exist, how projects get built, what they cost, and what separates a well-executed implementation from a costly hype-led initiative. Whether you are taking your first step towards an AI initiative or planning the next phase of a broader transformation, this guide offers clarity, practical examples, and answers to the most common questions about AI software development services we hear from our clients every day.

AI: Current state and how it used to be

To begin, let’s analyze how AI capabilities have changed during the last decade. Earlier, systems were narrow: trained to perform one task in one context, reliable within those boundaries, and useless outside them. Today’s AI is different. Modern models reason across domains, process combinations of languages and images, generate novel outputs, and adapt to context in ways that earlier systems could not approach.

Consequently, AI has moved from a specialist tool to a general-purpose capability. Organisations in every industry now apply it to operations, customer experience, product development, and risk management. LLMs have matured, cloud computing has become more affordable, and the barrier to building AI-powered systems has dropped dramatically, especially with the emergence of developer-friendly infrastructure that makes deployment realistic for any organisation with the right partner.

Benefits of an early AI application

This shift in AI development solutions has created competitive pressure. Organisations that deploy AI effectively are not only cutting costs in isolated workflows but also building structural advantages that compound over time.

- An AI-powered pricing engine improves as it processes more transactions.

- A customer service model becomes more capable as it handles more conversations.

- A fraud detection system sharpens as it encounters more patterns.

Early adopters of Artificial Intelligence software development services accumulate data, model performance, and organisational capability that late movers cannot easily replicate simply by investing more money later.

The value proposition

Across sectors and use cases, AI delivers business value through four primary mechanisms.

- Cost reduction through intelligent automation of high-volume, repetitive tasks – document processing, customer query handling, compliance review, data classification – where organisations consistently report cost reductions in the targeted workflows.

- Revenue growth through personalisation, sharper demand forecasting, and more effective customer engagement. AI-driven recommendation engines alone lift average order value by 15-30% at mature deployments.

- Better decisions, made faster and grounded in data that no human team could analyse at scale, from supply chain optimisation to clinical risk stratification.

- New products and capabilities that were simply not possible before the introduction of AI application development services: conversational interfaces, generative tools, and computer vision systems that open revenue lines competitors cannot easily replicate.

The key to success is not an AI implementation alone

It lies in understanding where to invest first, in what order, and how to build a workable long-term strategy.

AI consulting: A step-by-step approach to maximum efficiency

The most common and most expensive mistake in AI is building in a rush: having a vision and an urge to bring it to life immediately, without a strategy or any prior evaluation. The pressure to demonstrate progress can push CEOs toward prototypes and pilots before the strategic groundwork is in place to ensure those efforts point in the right direction.

Good AI consulting is a part of AI software development services that solves this problem. It helps you make sure technical investment is aimed at the right problems, sequenced correctly, and grounded in an honest assessment of what the organisation can actually execute.

AI readiness assessment

A readiness assessment answers whether the organisation has what AI software solutions require to succeed. The audit covers data (Is there sufficient, clean, accessible data for the intended use case?), infrastructure (Are the systems and cloud environments in place to support AI workloads?), organisational capability (Is there internal literacy and leadership commitment to see a project through?), and regulatory exposure (Which compliance obligations govern what can and cannot be built?). Identifying gaps at this stage of Artificial Intelligence software development services is far cheaper than discovering them mid-project.

Use-case identification and prioritisation

Most organisations can generate a long list of potential AI applications quickly. Things get more complicated when it comes to prioritisation. Consultants work with business stakeholders to surface candidates across functions, then score them against business impact, technical feasibility, implementation risk, and strategic fit. The output is a ranked portfolio: high-value, achievable projects for immediate investment, a medium-term pipeline, and longer-horizon bets to pursue once foundational capability is established. This prevents the common failure of chasing a single high-visibility project while neglecting the quick but highly important wins.
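The scoring step can be sketched as a simple weighted model. This is a minimal illustration, not a standard methodology: the weights, the 1-to-5 scales, the `UseCase` class, and the three candidate projects are all hypothetical, and a real engagement would agree them with stakeholders.

```python
from dataclasses import dataclass

# Hypothetical weights for the four criteria named above; they sum to 1.0.
WEIGHTS = {"impact": 0.4, "feasibility": 0.3, "risk": 0.2, "fit": 0.1}


@dataclass
class UseCase:
    name: str
    impact: int       # business impact, 1 (low) to 5 (high)
    feasibility: int  # technical feasibility, 1 to 5
    risk: int         # implementation risk, 1 (low risk) to 5 (high risk)
    fit: int          # strategic fit, 1 to 5


def score(uc: UseCase) -> float:
    """Weighted score; risk is inverted so that lower risk scores higher."""
    return (
        WEIGHTS["impact"] * uc.impact
        + WEIGHTS["feasibility"] * uc.feasibility
        + WEIGHTS["risk"] * (6 - uc.risk)
        + WEIGHTS["fit"] * uc.fit
    )


def rank(use_cases: list[UseCase]) -> list[UseCase]:
    """Return the portfolio ordered from highest to lowest score."""
    return sorted(use_cases, key=score, reverse=True)


# Illustrative candidates only.
candidates = [
    UseCase("Invoice document processing", impact=3, feasibility=5, risk=1, fit=3),
    UseCase("Customer-facing chatbot", impact=4, feasibility=3, risk=3, fit=4),
    UseCase("Autonomous pricing agent", impact=5, feasibility=2, risk=5, fit=4),
]

ranked = rank(candidates)
for uc in ranked:
    print(f"{uc.name}: {score(uc):.2f}")
```

With these assumed inputs, the modest but low-risk document-processing project ranks above the higher-impact, higher-risk pricing agent. That is the "quick win first" ordering the ranked portfolio is meant to surface.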

ROI modeling

Before engineering resources are committed, a rigorous ROI model translates technical ambitions into financial language. It quantifies the full cost across the project lifecycle – discovery, development, infrastructure, integration, deployment, and ongoing operations – and projects the expected return: cost savings, revenue uplift, and risk reduction. Critically, it surfaces and stress-tests the assumptions embedded in that return. A project that looks attractive under optimistic assumptions may look very different when data quality, adoption rate, and performance benchmarks are tested for sensitivity against comparable implementations.
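To illustrate why stress-testing assumptions matters, here is a deliberately simplified ROI calculation in which only the adoption rate varies. Every figure, the `simple_roi` function, and the scenario names are illustrative assumptions. A real model would also discount cash flows and vary more than one input.

```python
def simple_roi(annual_benefit: float, adoption_rate: float,
               build_cost: float, annual_run_cost: float, years: int) -> float:
    """ROI over the period = (realised benefit - total cost) / total cost."""
    total_benefit = annual_benefit * adoption_rate * years
    total_cost = build_cost + annual_run_cost * years
    return (total_benefit - total_cost) / total_cost


# Hypothetical adoption rates for three scenarios.
scenarios = {"optimistic": 0.9, "base": 0.6, "pessimistic": 0.4}

# Hypothetical project: 300k to build, 50k per year to run,
# 400k per year of benefit if every intended user adopted the system.
results = {
    name: simple_roi(
        annual_benefit=400_000,
        adoption_rate=rate,
        build_cost=300_000,
        annual_run_cost=50_000,
        years=2,
    )
    for name, rate in scenarios.items()
}

for name, roi in results.items():
    print(f"{name}: ROI {roi:+.0%}")
```

Under these assumed numbers, the same project swings from a return of about +80% to a loss of about 20% on adoption rate alone, which is why the assumption deserves scrutiny before budget is committed.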

Roadmapping the AI development services

The roadmap synthesises the assessment, prioritised use cases, and ROI models into a sequenced execution plan. It organises investments into three horizons: quick wins in the first six months that demonstrate tangible ROI and build confidence; core transformation initiatives over the following year; and longer-term bets that could redefine competitive position once foundational capability is in place. It also specifies the enabling investments for data infrastructure, governance frameworks, and training programmes that underpin everything built on top. This document should be revisited quarterly as AI technology evolves and the organisational knowledge base grows.

AI software development services

A capable AI development partner offers a range of services that address different stages and types of need, from strategic advice through to the engineering, deployment, and long-term operation of production systems. The most common are the following.

Custom AI software development

Custom AI software development is the end-to-end process of designing, building, and deploying applications where machine learning is central to how the system works. It’s not a bolt-on feature, but the core engine. Unlike off-the-shelf tools, a custom system is trained on your data, built around your workflows, and integrated with your existing technology. It covers the full stack: data pipelines, model development, application logic, and production infrastructure.

This is the right path when no existing product fits your requirements, when proprietary data is a genuine source of advantage, or when the problem is so new that no packaged solution for it exists.

Product AI development service

When the goal is not internal use but a product to sell or license to others, the engineering requirements are significantly higher. External products demand multi-tenant architectures, polished user experiences, self-service onboarding, billing infrastructure, and model performance robust enough to handle the unpredictable inputs of real customers at scale.

Beyond engineering, AI product development encompasses the commercial layer: positioning, pricing model design, and go-to-market readiness. Technical partners with experience in both AI engineering and product commercialisation are rare and incredibly valuable at this stage.

AI consulting services

Advisory engagements help organisations make better AI decisions without necessarily building anything. In practice, this ranges from a focused architecture review (a few weeks, a specific technical question, a clear recommendation) to an extended strategic engagement embedded with leadership to develop an AI strategy, govern vendor selection, and design governance frameworks.

The highest-leverage consulting work tends to happen at decision points: before committing to a technology stack, before signing an implementation contract, or after an AI initiative underdelivers and an objective diagnosis is needed.

Enterprise AI solutions

Large organisations have different requirements from smaller deployments. Those include thousands of concurrent users, stringent uptime SLAs, role-based access controls, integration with enterprise identity systems, comprehensive audit logging for compliance, and architectures that scale across departments without data leakage between them. Building for the enterprise context also means building for adoption – change management, phased rollouts, training programmes, and feedback mechanisms that ensure the technology is actually used by the people it was built for.

Generative AI software development service

Generative AI applications use large language models and related architectures to produce content (text, code, images, structured data) in response to prompts and context. Enterprise applications range from document drafting assistants and knowledge synthesis tools to AI coding copilots and customer-facing conversational interfaces. The dominant architectural pattern for grounding these systems in real organisational knowledge is Retrieval-Augmented Generation (RAG): rather than relying on a model’s general training, the system retrieves relevant internal documents at query time, dramatically reducing the risk of fabricated outputs. More advanced agentic systems – where the model can invoke tools, query databases, and execute multi-step workflows autonomously – represent the current frontier of what is being built in production.
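The RAG pattern can be sketched in a few lines. This is a toy illustration under stated assumptions: retrieval here ranks documents by shared words, whereas production systems use a learned embedding model and a vector database. The documents, the function names, and the prompt wording are all hypothetical. The call to the language model is left out because it depends on the provider.

```python
import re


def tokens(text: str) -> set[str]:
    """Lower-cased word set; a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the query."""
    q = tokens(query)
    return sorted(documents, key=lambda d: len(q & tokens(d)), reverse=True)[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Assemble the grounded prompt that would be sent to the model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


# Hypothetical internal knowledge base.
docs = [
    "Refunds are issued within 14 days of a return being received.",
    "The warehouse in Leeds ships orders on weekdays only.",
    "Support is available by email and live chat.",
]

prompt = build_prompt("How many days do refunds take?", docs, k=1)
print(prompt)
```

The point of the pattern is visible in the assembled prompt: the model is asked to answer from the retrieved policy text rather than from its general training.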

AI integration services

Most organisations cannot, and should not, rebuild their technology stack to accommodate AI. Integration services connect AI capabilities to what already exists, pulling data from operational databases, data lakes, and third-party APIs, embedding AI features into existing applications through APIs and UI components, and connecting AI outputs to downstream business processes and ERP systems. The integration layer is often more complex than the AI itself, particularly in organisations with legacy infrastructure, and it deserves as much engineering rigour as the model development.

AI-powered analytics

AI-powered analytics shifts business intelligence from describing the past to predicting the future. Predictive models forecast demand, churn risk, equipment failure, and credit default, and feed those predictions directly into the systems that act on them. Prescriptive analytics goes further, recommending the best course of action given constraints: optimal pricing, routing, or resource allocation.

Anomaly detection learns the baseline of normal behaviour and flags genuine deviations – far more effectively than rule-based alert systems that generate constant false-positive noise. Natural language query interfaces allow non-technical users to ask data questions in plain English and get answers immediately, without waiting for an analyst.
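The idea of learning a baseline can be shown with the simplest possible statistical detector, a z-score test. This is a sketch only: production anomaly detection typically uses richer models that account for seasonality and multiple signals, and the history values, function names, and threshold of three standard deviations are illustrative assumptions.

```python
from statistics import mean, stdev


def fit_baseline(history: list[float]) -> tuple[float, float]:
    """Learn 'normal' as the mean and sample standard deviation of past values."""
    return mean(history), stdev(history)


def is_anomaly(value: float, baseline: tuple[float, float],
               z_threshold: float = 3.0) -> bool:
    """Flag values more than z_threshold standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold


# Hypothetical hourly request counts observed during normal operation.
history = [100, 102, 98, 101, 99, 103, 97, 100]
baseline = fit_baseline(history)

for reading in (104, 130):
    print(reading, "anomaly" if is_anomaly(reading, baseline) else "normal")
```

A fixed rule such as "alert above 103" would fire on the reading of 104, which is within normal variation for this series. The learned baseline ignores it and flags only the reading of 130.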

Intelligent RPA bots

Traditional Robotic Process Automation follows rigid scripts that break when inputs vary or applications change. Intelligent RPA adds AI to make automation genuinely resilient. Document intelligence components extract structured data from variable-format documents such as invoices, contracts, or medical records, which standard RPA cannot parse.

ML-based decision components handle judgment calls that rule-based logic cannot make: classifying intent, scoring risk, and determining the right escalation path. The result is automation that handles a wider range of cases, degrades gracefully on edge cases rather than failing silently, and requires less maintenance as the surrounding environment changes.

PoC development

A Proof-of-Concept is a time-bounded experiment (typically four to eight weeks) designed to answer one high-uncertainty question before significant development investment is committed. Most often, the question is whether AI can actually solve the problem with the available data at the required performance level.

A rigorous PoC is not the same as a polished demo. It uses representative real data, evaluates model performance against pre-defined success criteria, and delivers an explicit recommendation. If the result is negative, it’s not necessarily a failure. It is the cheapest possible way to avoid a much larger investment in the wrong direction.
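Evaluating against pre-defined success criteria can be as simple as the check below. It is a minimal sketch for a binary classification PoC: the thresholds in `CRITERIA`, the labels, and the predictions are made-up values, and a real evaluation would use a much larger held-out sample.

```python
def precision_recall(y_true: list[int], y_pred: list[int]) -> tuple[float, float]:
    """Precision and recall for the positive class (label 1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical criteria, agreed before the PoC starts.
CRITERIA = {"precision": 0.80, "recall": 0.70}


def recommendation(y_true: list[int], y_pred: list[int]) -> str:
    """Turn measured performance into an explicit go/no-go statement."""
    precision, recall = precision_recall(y_true, y_pred)
    passed = precision >= CRITERIA["precision"] and recall >= CRITERIA["recall"]
    return "proceed" if passed else "redirect or stop"


# Illustrative held-out labels and model predictions.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

print(precision_recall(y_true, y_pred), recommendation(y_true, y_pred))
```

Here the model reaches 0.75 precision and 0.75 recall, so it misses the precision bar and the recommendation is negative. Fixing the criteria in advance is what keeps that outcome from being reinterpreted as a success after the fact.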

Natural Language Processing software

NLP systems read, understand, and act on human language – one of the most widely deployed categories of AI in production today. Document classification routes incoming emails, filings, and records to the right process without manual triaging. Information extraction pulls specific entities and relationships from free text: contract obligations, drug dosages in clinical notes, equipment identifiers in maintenance logs. Semantic search returns results based on meaning rather than keyword matching. Sentiment analysis monitors tone and opinion across customer feedback, social media, and employee communication at a volume that manual review cannot approach.

Recommendation engines

Recommendation engines surface the right product, content, or action for each user. They are among the highest-ROI AI investments in customer-facing applications. The foundational techniques are collaborative filtering (what did similar users engage with?) and content-based filtering (what shares attributes with what this user has liked?). Production systems typically combine both.
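Collaborative filtering in its simplest user-based form looks like the sketch below. The users, items, ratings, and function names are hypothetical, and production systems use matrix factorisation or neural models over far larger data. The logic is the same "what did similar users engage with?" question.

```python
import math

# Hypothetical ratings: user -> {item: rating from 1 to 5}.
ratings = {
    "ana": {"laptop": 5, "mouse": 4, "monitor": 5},
    "ben": {"laptop": 5, "mouse": 5, "keyboard": 4},
    "cara": {"novel": 5, "cookbook": 4},
}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity between two sparse rating vectors."""
    dot = sum(a[i] * b[i] for i in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def recommend(user: str, ratings: dict[str, dict[str, float]], k: int = 1) -> list[str]:
    """Score unseen items by the similarity-weighted ratings of other users."""
    seen = ratings[user]
    scores: dict[str, float] = {}
    for other, their in ratings.items():
        if other == user:
            continue
        sim = cosine(seen, their)
        for item, rating in their.items():
            if item not in seen:
                scores[item] = scores.get(item, 0.0) + sim * rating
    return sorted(scores, key=scores.get, reverse=True)[:k]


print(recommend("ana", ratings))
```

Ana is recommended the keyboard because Ben, whose ratings overlap with hers, rated it highly. Cara shares no items with Ana, so her books contribute nothing to the score.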

Modern engines add sequential modelling to capture intent within a session, real-time feature engineering to reflect what the user is doing right now, and multi-objective optimisation to balance relevance, diversity, and commercial goals. At scale, marginal improvements in recommendation quality compound into significant revenue impact.

AI accelerators

Experienced AI teams build and maintain reusable components such as data pipeline templates, fine-tuning frameworks, RAG architecture packages, evaluation harnesses, and monitoring dashboards. The goal is to reduce the time required to go from project start to production deployment.

Rather than rebuilding common patterns from scratch on every engagement, these accelerators significantly compress delivery timelines while improving initial quality, because the components have been stress-tested across multiple prior projects. When evaluating partners, asking specifically what accelerators they bring to a project is one of the most reliable indicators of delivery maturity.

Model training and support

General-purpose foundation models are a strong starting point, but organisations with proprietary data, domain-specific requirements, or strict performance thresholds benefit from custom model development. This covers the full training lifecycle: dataset curation, labelling quality assurance, fine-tuning using techniques like LoRA and QLoRA to adapt large models efficiently to specific domains, evaluation against domain benchmarks, and production deployment. Ongoing support then maintains performance over time by monitoring for drift, managing retraining cycles, and providing incident response when production behaviour changes unexpectedly.
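The efficiency of LoRA comes from simple arithmetic. Instead of updating a full weight matrix, LoRA freezes it and trains two small low-rank matrices whose product is added to it. QLoRA additionally stores the frozen base weights in quantised form. The sketch below only counts parameters for one layer. The layer size and rank are illustrative assumptions, not a recommendation.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole d_out x d_in matrix is updated."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for LoRA: B is d_out x rank, A is rank x d_in."""
    return rank * (d_out + d_in)


# Hypothetical layer: a 4096 x 4096 projection adapted with rank 8.
d_out, d_in, rank = 4096, 4096, 8

full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {rank}): {lora:,} trainable parameters")
print(f"fraction trained: {lora / full:.2%}")
```

For this assumed layer, LoRA trains 65,536 parameters instead of 16,777,216, or about 0.39% of the original. That is why domain adaptation of large models becomes feasible on modest hardware.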

Chatbot development

Modern enterprise chatbots, built on LLMs rather than decision trees, handle open-ended natural language, maintain context across a conversation, access live data through system integrations, and escalate intelligently to human agents. Customer-facing deployments routinely handle up to 60% of inbound contact volume autonomously. They assist in resolving queries, processing requests, and updating records without human intervention.

Internal knowledge assistants reduce the time employees spend searching for information and the burden on subject matter experts answering repetitive questions. Beyond the model, robust chatbot development requires careful design of scope boundaries, escalation logic, safety guardrails, and conversation logging infrastructure for compliance and continuous improvement.

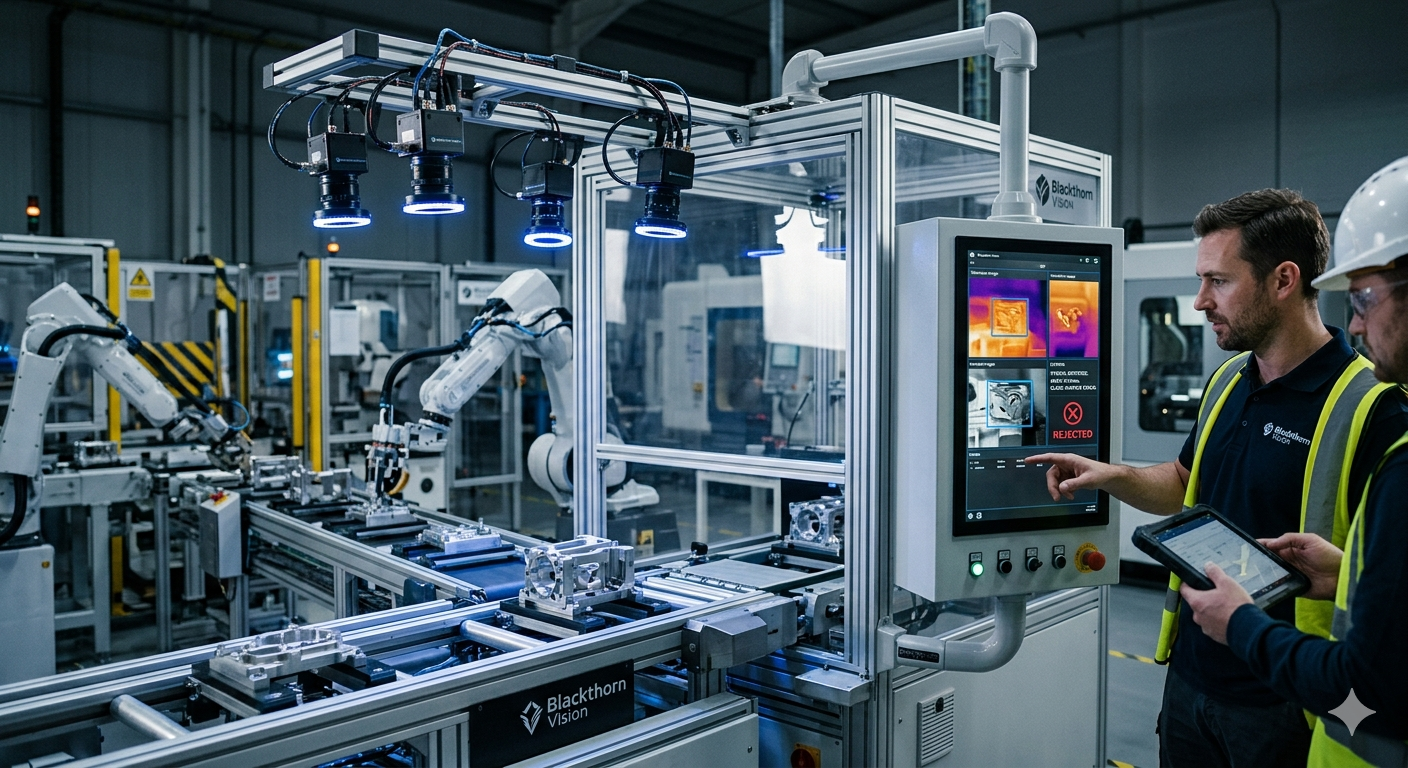

Computer vision systems

Computer vision applies AI to images and video, enabling machines to detect, classify, and measure what they see. Industrial quality inspection systems identify surface defects, dimensional deviations, and assembly errors on production lines at speeds no human inspector can match, while generating granular data that feeds back into manufacturing process improvement.

Medical imaging AI, for example, flags abnormalities in radiology and pathology scans for clinician review, prioritising urgent cases and producing measurements more consistent than manual assessment. Beyond inspection, computer vision powers retail analytics, document and text recognition, security monitoring, and agricultural sensing – any domain where consistent visual assessment at scale creates value.

Industry-specific AI software development solutions

The highest-value AI implementations are built around the specific data, workflows, regulatory environments, and competitive dynamics of a particular industry. Domain expertise shortens delivery timelines and reduces the risk of building systems that fail compliance requirements or miss performance benchmarks that only practitioners would anticipate.

Healthcare

Healthcare AI software development spans the full clinical and administrative stack: diagnostic imaging models that flag abnormalities for clinician review, NLP systems that extract structured data from clinical notes and discharge summaries, patient risk stratification models that predict readmission or deterioration, and prior authorisation automation that processes insurance requests in minutes. The regulatory bar is high: FDA clearance in the US, CE marking in Europe, and clinical validation requirements all demand engineering rigour that general-purpose teams often underestimate.

Blackthorn Vision case study: Selux Diagnostics

We partnered with Selux Diagnostics, a US biotech company developing a rapid antibiotic susceptibility testing system – a direct response to the global antimicrobial resistance crisis. The team built the Site Software platform that integrates Selux’s laboratory instruments with hospital information systems, embedding machine learning algorithms that convert raw instrument measurements into clinically actionable results.

The engagement required algorithm development across Python-based ML infrastructure, database design, and UI implementation – all delivered under the guidance of Selux’s Director of Software and Head of Algorithm and Data Science.

Fintech

Financial services was among the first industries to adopt AI, and for good reason. The combination of high transaction volumes, structured data, and quantifiable outcomes creates near-ideal conditions for machine learning.

Real-time fraud detection models process thousands of transactions per second, identifying patterns that rule-based systems miss while reducing the false positives that frustrate legitimate customers. Credit scoring models that incorporate alternative data sources expand access while improving accuracy. AML systems detect complex multi-hop transaction patterns indicative of money laundering at a scale no human team could monitor. NLP tools monitor earnings calls, regulatory filings, and news for material signals.

Blackthorn Vision case study: UK financial advisory firm

We built a client management system for a UK-based financial advisory company, combining robust client portfolio data handling with regulatory-grade audit trails and clean client-facing interfaces. The project reflects a pattern common across financial services: the requirement to pair AI-assisted analytics with compliance documentation and explainability standards that regulators and clients alike demand.

Oil & Gas

Oil and gas presents some of the most demanding AI deployment conditions: safety-critical environments, geographically distributed assets, and operational decisions where errors are costly and sometimes irreversible. Predictive maintenance AI analyses sensor data from pumps, compressors, and wellhead equipment to predict failures before they occur, reducing unplanned downtime that can cost hundreds of thousands of dollars per day.

Seismic data interpretation models assist geoscientists in identifying reservoir structures and drilling targets. Pipeline integrity systems detect anomalies in pressure and flow data long before they become safety incidents.

Retail & E-commerce

Retail AI operates across the full customer journey, and its ROI is among the most measurable of any sector. Personalised recommendation engines drive 15-35% of revenue at mature e-commerce platforms. Demand forecasting models reduce overstock and out-of-stock events simultaneously by predicting SKU-level demand, accounting for seasonality, promotions, and local signals that manual planners cannot model at scale.

Dynamic pricing engines adjust in real time based on competitor rates, inventory levels, and demand elasticity. Computer vision monitors shelf compliance and triggers restocking alerts. The combination of high transaction volumes, rich behavioural data, and directly measurable conversion metrics makes retail one of the clearest environments for demonstrating AI ROI.

Read also: AI and machine learning in retail: What’s working, what’s next, and how to implement it

Logistics

Logistics AI addresses the core challenge of moving goods reliably across complex supply chains under persistent uncertainty. Route optimisation algorithms reduce fuel consumption and delivery time simultaneously. Warehouse data AI orchestrates picking, packing, and storage allocation across fulfillment centres, reducing pick errors and adapting dynamically to changing order profiles. Supply chain risk AI monitors supplier health, geopolitical signals, and commodity prices to anticipate disruptions weeks in advance, giving procurement teams time to pre-position inventory or activate alternatives. Document AI automates the processing of bills of lading, customs declarations, and freight invoices – variable-format documents that currently consume significant manual time at every node of the supply chain.

Travel and Hospitality

The travel and hospitality industry faces one of the most volatile demand environments of any sector, making AI-driven forecasting and pricing particularly valuable. Revenue management AI continuously adjusts room rates, airfares, and package prices based on booking pace, competitive rates, and demand forecasts, optimising yield across hundreds of rate categories simultaneously.

Demand forecasting gives operators advance visibility into occupancy trends, enabling earlier and more accurate staffing and procurement decisions. Personalised itinerary and recommendation tools, sentiment analysis on guest feedback, and AI-assisted concierge services represent the next layer of value being built on top of operational AI foundations.

Biotech

AI is accelerating drug discovery and development in ways that are changing the economics of bringing new therapies to patients. Protein structure prediction and molecular property models screen candidate compounds in silico, dramatically narrowing the chemical space experimental teams need to explore. Multi-omics data platforms integrate genomic, transcriptomic, and proteomic data to identify disease mechanisms and therapeutic targets that individual data modalities cannot reveal. Clinical trial matching AI connects eligible patients to relevant studies faster and more accurately than manual review.

AI development lifecycle

AI development is fundamentally different from conventional software delivery. Traditional software executes logic that engineers write; AI systems learn behaviour from data. That distinction has real consequences for how projects are scoped, executed, and measured, and it demands a methodology designed around the probabilistic nature of model outputs and the central role of data quality throughout.

Step 1: Discovery and data audit

Every AI project begins with a structured assessment of the problem and the data. Engineers and data scientists work with domain experts to develop a precise problem formulation: what exactly should the model do, what does success look like, and what data is available to train it?

The data audit examines every relevant source: volume, quality, completeness, labelling status, and access controls. This phase consistently surfaces issues that would derail development later, such as gaps in data quality, siloed sources that require integration work, and privacy constraints that require anonymisation.

Step 2: Prototyping and PoC

Rather than committing to full development before validating the core technical thesis, professional teams build a constrained prototype. Typically, it’s ready within four to eight weeks and is based on a representative sample of real data, simplified infrastructure, and a reduced feature set. The goal is to check whether the model can meet the required performance threshold. If it can, the PoC provides evidence to justify full investment. If it cannot, the project either redirects or stops.

Step 3: Model development and training

With the PoC validated, full model development begins. This phase involves systematic experimentation across architectures and training strategies, feature engineering, hyperparameter optimisation, and rigorous evaluation on held-out test sets and adversarial cases. For generative AI applications, it includes selecting and configuring the foundation model, designing the retrieval architecture, implementing guardrails and safety filters, and fine-tuning on domain-specific data where baseline performance is insufficient. Every decision is documented for reproducibility and future audit.

Step 4: AI solutions development

This phase wraps the trained model in production-grade software: REST or gRPC APIs, authentication, input validation, caching, CI/CD pipelines for safe model updates, and connectors to the upstream data systems and downstream applications that the model serves. Deployment environments are configured for the specific demands of enterprise AI – horizontal scaling, geographic distribution for latency-sensitive workloads, and data isolation for sensitive applications. Load testing and security review validate behaviour under production conditions before go-live.

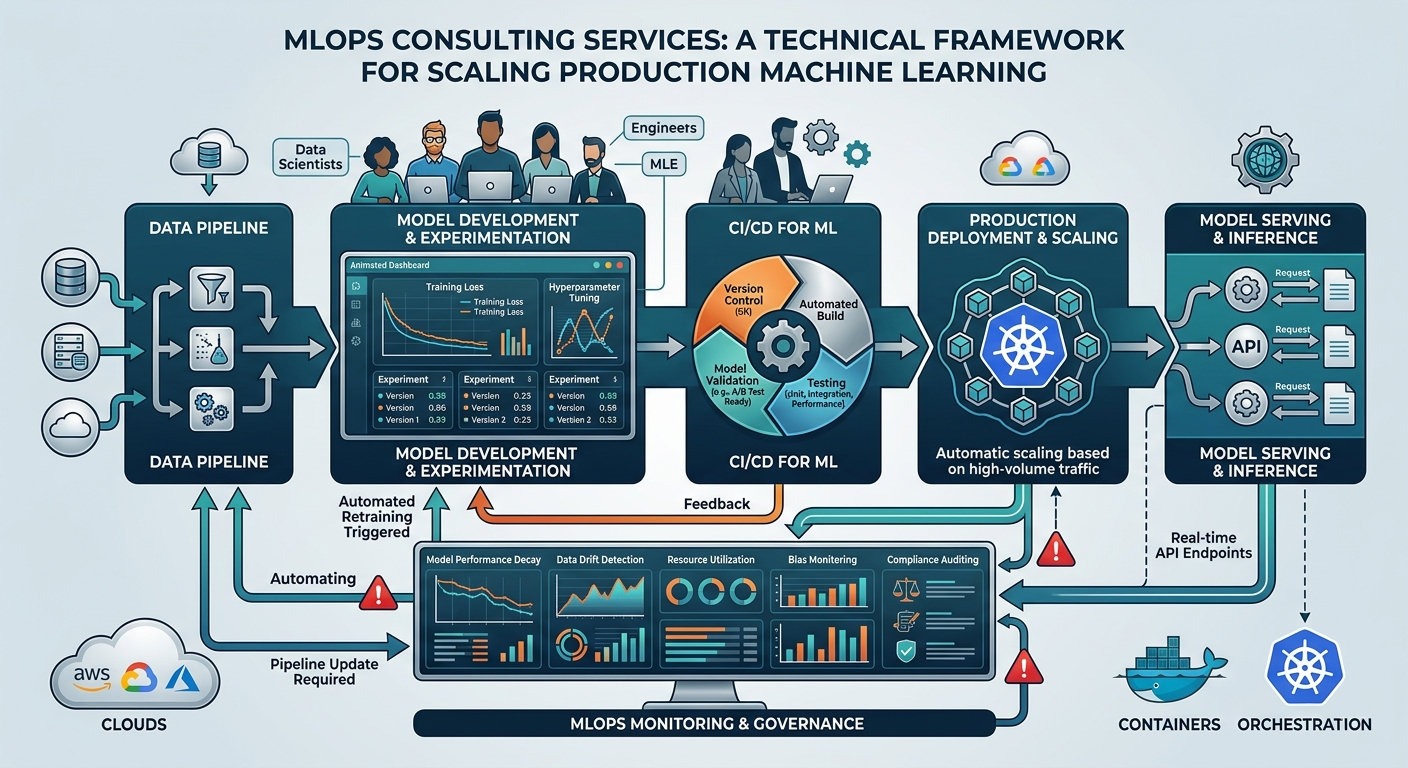

Step 5: MLOps and LLMOps

As the real world changes (customer behaviour shifts, products evolve, language patterns update), model performance degrades. MLOps is the operational discipline that keeps deployed systems performing well over time:

- Continuous monitoring of data drift, prediction drift, and business KPI trends;

- Automated alerting when metrics breach thresholds;

- Scheduled or triggered retraining pipelines;

- Version management and rollback capability.

For LLM-based systems, LLMOps adds hallucination rate monitoring, prompt version management, and output quality evaluation pipelines to the stack.
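Data drift monitoring with automated alerting can be sketched using the Population Stability Index (PSI), one common drift metric among several. The bin count, the sample data, and the 0.2 alert threshold below are assumptions. The threshold is a widely used rule of thumb rather than a fixed standard.

```python
import math


def psi(expected: list[float], actual: list[float],
        bins: int = 4, eps: float = 1e-6) -> float:
    """Population Stability Index between a training sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = ((hi - lo) / bins) or 1.0  # avoid division by zero for constant data

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # eps keeps empty bins from producing log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb value

# Hypothetical feature values at training time and in production.
training = [float(v) for v in range(100)]
live_stable = list(training)
live_shifted = [v + 40 for v in training]

for name, sample in (("stable", live_stable), ("shifted", live_shifted)):
    value = psi(training, sample)
    status = "ALERT: consider retraining" if value > ALERT_THRESHOLD else "ok"
    print(f"{name}: PSI={value:.3f} {status}")
```

In a real pipeline, a check like this would run on a schedule for each important feature and for the prediction distribution, with a threshold breach triggering the alerting and retraining steps listed above.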

What technical stack is involved in AI software development services

The tools a development team works with are a meaningful signal of their technical currency and delivery capability. The AI technology landscape evolves faster than almost any other area of software. Teams working with outdated tooling produce systems that are harder to maintain and less capable than those built with current practice. Let’s look at the frameworks, ecosystems, and databases.

Frameworks: PyTorch, TensorFlow, Keras, JAX

Deep learning frameworks are the foundational libraries on which models are built and trained. PyTorch has become the de facto standard for both research and production development. Its dynamic computation graph, intuitive Python interface, and rich ecosystem (including Hugging Face’s Transformers library) make it the starting point for most modern model work.

TensorFlow remains widely deployed, particularly in organisations with established ML infrastructure. TensorFlow Serving and TensorFlow Lite offer strong production serving and edge deployment capabilities, respectively.

Keras provides a clean high-level API that now runs across PyTorch, TensorFlow, and JAX backends, accelerating standard model development for teams that want to move fast without managing low-level details.

JAX, developed by Google Research, is the framework of choice for frontier large-scale training – its hardware-accelerated numerical computing and XLA compiler backend deliver exceptional efficiency on GPU and TPU hardware, and it is the foundation of Google’s own large model development, including Gemini.

Read also: PyTorch vs TensorFlow: What’s the difference and which one wins

LLM ecosystem: OpenAI, Anthropic, Google Gemini, Llama 3, Mistral

There is no single large language model that leads in every dimension. Mature engineering teams select models based on the specific requirements of each use case rather than defaulting to a single provider.

OpenAI’s GPT-4o and o-series lead on general-purpose capability and developer ecosystem maturity. The o-series reasoning models deliver particularly strong performance on complex analytical tasks.

Anthropic’s Claude is consistently preferred for tasks requiring nuanced instruction-following, long-context document processing (up to 200,000 tokens), and applications where output safety and alignment standards are vital. The latter makes it a natural fit for regulated industries and sensitive enterprise deployments.

Google Gemini offers native multi-modal reasoning across text, images, audio, and video, and integrates tightly with Google Cloud infrastructure.

Meta’s Llama 3 and Mistral are the leading open-weight alternatives. Their publicly released model weights can be deployed entirely within an organisation’s own infrastructure, eliminating data residency concerns and per-token API costs at scale. Mistral’s mixture-of-experts models (the Mixtral family) deliver strong performance at a lower compute cost than equivalent dense models.

Cloud and infrastructure: AWS, Azure, Google Cloud

AWS SageMaker is the most widely adopted managed ML platform. It covers the full lifecycle from data preparation and experiment tracking through distributed training, model hosting with auto-scaling endpoints, and production drift monitoring. Its deep integration with the broader AWS ecosystem makes it the best choice for organisations that have standardised on AWS.

Azure AI Studio provides enterprise-grade access to OpenAI models within Azure’s security and compliance perimeter, satisfying data residency requirements that the consumer OpenAI API cannot. It integrates tightly with Azure Active Directory and Microsoft’s compliance frameworks (FedRAMP, HIPAA), and its prompt flow tooling streamlines LLM application development.

Google Cloud Vertex AI is the unified MLOps platform for organisations in the Google ecosystem, offering native access to Gemini and other Google models, TPU hardware for large-scale training, and tight integration with BigQuery for data-intensive applications.

Vector databases: Pinecone, Milvus, Weaviate, Chroma

Vector databases store data as high-dimensional numerical representations – embeddings that capture semantic meaning – and retrieve the most conceptually similar records to a query at millisecond speed. They are the foundational infrastructure layer of RAG architectures, semantic search, and recommendation systems: any application that needs to find the most relevant content in a large corpus based on meaning rather than keyword matching.
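To make the idea of retrieval by meaning concrete, here is a minimal sketch of what a vector database does conceptually: it compares a query embedding against stored embeddings and returns the closest matches. The three-dimensional vectors and document names are made up for illustration; real embeddings have hundreds or thousands of dimensions, and production systems use approximate nearest-neighbour indexes rather than the brute-force scan shown here.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], records: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the ids of the k records most similar to the query."""
    scored = [(cosine_similarity(query, vec), doc_id) for doc_id, vec in records.items()]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Toy embeddings (hypothetical values).
records = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "returns-faq": [0.8, 0.2, 0.1],
}
query = [1.0, 0.0, 0.0]
print(top_k(query, records))  # ['refund-policy', 'returns-faq']
```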

Pinecone is the leading fully managed service, optimised for production scale with transparent infrastructure management and hybrid search (vector similarity combined with metadata filters).

Milvus is the most capable open-source option for organisations requiring self-hosted deployment, supporting multiple index types and GPU-accelerated search.

Weaviate combines vector search with a schema-aware object model and native support for generative search pipelines.

Chroma is lightweight, Python-native, and the fastest path from concept to a working RAG prototype. Teams typically migrate to Pinecone or Milvus at production scale.

Orchestration: LangChain, LlamaIndex, Haystack

Modern AI applications chain multiple LLM calls together, retrieving context, invoking tools, routing between models, and synthesising outputs in sequences that can branch and adapt based on intermediate results. Orchestration frameworks provide the scaffolding for these workflows.
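The pattern these frameworks implement can be sketched in plain Python without any of them. The example below is a simplified illustration, not the API of LangChain, LlamaIndex, or Haystack: `retrieve` and `stub_llm` are hypothetical stand-ins for a vector database lookup and a model call, and the workflow branches when retrieval returns nothing.

```python
from typing import Callable


def retrieve(question: str) -> list[str]:
    """Stand-in for a retrieval step (a real system would query a vector store)."""
    knowledge = {
        "refund": "Refunds are issued within 14 days.",
        "shipping": "Orders ship in 2 business days.",
    }
    return [text for key, text in knowledge.items() if key in question.lower()]


def stub_llm(prompt: str) -> str:
    """Stand-in for a model call: returns the first context line of the prompt."""
    return prompt.splitlines()[1]


def answer(
    question: str,
    retrieve_fn: Callable[[str], list[str]],
    llm_fn: Callable[[str], str],
) -> str:
    """Retrieve context, branch on the intermediate result, then synthesise."""
    context = retrieve_fn(question)
    if not context:
        # Branch: nothing to ground the answer in, so hand off instead of guessing.
        return "ESCALATE: no supporting context found"
    prompt = "Context:\n" + "\n".join(context) + f"\n\nQuestion: {question}"
    return llm_fn(prompt)


print(answer("How do I get a refund?", retrieve, stub_llm))
print(answer("What is your address?", retrieve, stub_llm))
```

Passing the retrieval and model steps in as functions keeps each stage replaceable, which is the main design idea orchestration frameworks formalise.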

LangChain is the most widely adopted, offering extensive integrations across LLM providers, vector databases, tools, and data sources, along with abstractions for agents, memory, and chains. Its companion platform, LangSmith, provides observability and evaluation in production.

LlamaIndex specialises in the data connectivity and retrieval layer – sophisticated document parsing, chunking, indexing, and advanced retrieval techniques including sub-question decomposition and re-ranking. It’s the preferred choice for knowledge-intensive RAG applications.
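Chunking is the simplest of these steps to illustrate. The sketch below splits text into overlapping word windows so that a sentence falling on a boundary still appears whole in at least one chunk. It is a generic illustration rather than LlamaIndex's own implementation, and the default sizes are arbitrary assumptions.

```python
def chunk_words(text: str, chunk_size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into chunks of chunk_size words that overlap by `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
print(chunk_words(sample, chunk_size=4, overlap=1))
# ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```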

Haystack is a production-focused framework with a strongly modular pipeline architecture and a built-in evaluation framework covering retrieval quality, answer faithfulness, and contextual precision – well-suited to teams prioritising rigorous quality measurement alongside delivery.
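Retrieval quality is typically measured by comparing what the system returned against a hand-labelled set of relevant documents. The following sketch shows two common metrics, precision at k and recall at k. It is a generic illustration under assumed inputs, not Haystack's evaluation API.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of the top-k retrieved documents that are actually relevant."""
    top = retrieved[:k]
    if not top:
        return 0.0
    return sum(1 for doc in top if doc in relevant) / len(top)


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of all relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    found = set(retrieved[:k]) & relevant
    return len(found) / len(relevant)


# Hypothetical labelled example: the system returned a, b, c, d in that order.
retrieved = ["a", "b", "c", "d"]
relevant = {"a", "c", "e"}
print(precision_at_k(retrieved, relevant, k=2))  # 0.5
print(round(recall_at_k(retrieved, relevant, k=4), 3))  # 0.667
```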

Ethics, security, and compliance

Security and ethics are not abstract or purely moral questions in AI work. They determine whether a deployment is legally permissible, organisationally sustainable, and commercially viable. The consequences of getting this wrong range from regulatory fines and reputational damage to genuine harm to users and third parties. Responsible AI partners treat these dimensions as first-class engineering concerns.

Data privacy concern

AI systems ingest, process, and often retain sensitive data. Privacy-by-design principles such as data minimisation, purpose limitation, retention policies, and consent management must be embedded in the architecture. Training data requires de-identification and anonymisation pipelines to remove personally identifiable information before it enters the model.
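A de-identification step can be as simple as masking obvious identifiers before text reaches a training set. The sketch below uses two regular expressions for email addresses and phone numbers. The patterns are illustrative assumptions and will miss many real-world formats; production pipelines usually combine pattern matching with named-entity recognition and manual sampling.

```python
import re

# Simplified patterns, assumed for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


print(redact("Contact jane.doe@example.com or +1 (555) 010-9999 today."))
# Contact [EMAIL] or [PHONE] today.
```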

Production inference must handle inputs according to the organisation’s data classification policy, with appropriate encryption in transit and at rest. Compliance with GDPR, CCPA, HIPAA, and sector-specific regulations is verified through legal review at the design stage, not assumed during deployment.

Ethical concern

Ethical AI describes systems that are fair, accountable, and transparent about their limitations. Bias audits examine whether model outputs systematically disadvantage protected groups, which is a critical issue in lending, hiring, medical triage, and other high-stakes decisions.
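One common starting point for a bias audit is to compare approval rates across groups. The sketch below computes per-group selection rates and their ratio, often called the disparate impact ratio. The data is invented, and the 0.8 threshold mentioned in the comment is a widely cited rule of thumb rather than a universal legal standard.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Approval rate per group from (group, approved) pairs."""
    totals: dict[str, int] = defaultdict(int)
    approved: dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0
    return min(rates.values()) / highest


# Hypothetical audit sample: group A approved 8 of 10, group B approved 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 3))  # ratios below roughly 0.8 usually warrant investigation
```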

Explainability work produces methods for communicating why a model reached a specific output, satisfying both internal governance requirements and regulations like the EU AI Act, which mandates human-readable explanations for automated decisions in high-risk domains. Output safety filters prevent models from generating harmful or inappropriate content. Human-in-the-loop review preserves oversight for decisions where the cost of error is significant enough to warrant it.

Security concern

AI systems face both conventional security threats and attack vectors specific to ML. Conventional threats (unauthorised access, data exfiltration, injection attacks) are addressed through standard security engineering. AI-specific risks require additional defences:

- Prompt injection attacks where malicious inputs manipulate model behaviour require input sanitisation and instruction hierarchy enforcement;

- Model extraction attacks where adversaries reconstruct proprietary models through API queries require rate limiting and output perturbation;

- Adversarial examples designed to fool vision and classification models require adversarial training and robust input validation.

Production AI systems should undergo AI-specific penetration testing before launch and regular red-teaming thereafter.
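Rate limiting, mentioned above as a defence against model extraction, is often implemented with a token bucket: each client gets a small burst allowance that refills at a fixed rate. The sketch below is one possible implementation under assumed parameters. The current time is passed in explicitly so the behaviour is easy to test; a real service would typically supply `time.monotonic()`.

```python
class TokenBucket:
    """Allow short bursts while capping the sustained request rate per client."""

    def __init__(self, capacity: int, refill_per_second: float) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)  # start with a full bucket
        self.last = 0.0  # timestamp of the previous request, in seconds

    def allow(self, now: float) -> bool:
        """Return True if a request arriving at time `now` may proceed."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical policy: burst of 2 requests, then 1 request per second.
bucket = TokenBucket(capacity=2, refill_per_second=1.0)
print([bucket.allow(0.0), bucket.allow(0.0), bucket.allow(0.0), bucket.allow(1.0)])
# [True, True, False, True]
```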

Read also: AI data governance: Everything technology leaders need to know

Sovereign AI software development services

Sovereign AI refers to a nation’s or organisation’s capability to develop, own, and control its own AI infrastructure, data, and models. For such nations and organisations, transmitting data to external cloud providers or third-party AI APIs may be legally or operationally impermissible.

Sovereign AI implementations run entirely within the organisation’s own infrastructure – on-premise, private cloud, or dedicated government cloud environments. This includes private deployment of open-weight models such as Llama 3 and Mistral, air-gapped training pipelines, and self-hosted inference infrastructure. The cost premium is justified when the alternative – data residency violation, loss of classified information, or failure of regulatory approval – is unacceptable.

Regulatory context: The EU AI Act is the world’s first comprehensive, risk-based artificial intelligence law, setting mandatory safety and transparency standards for AI systems in the EU. It prohibits practices deemed to pose unacceptable risk, mandates strict compliance for high-risk systems, and sets transparency requirements for generative AI.

Pricing and engagement models

AI development investments range from focused PoC experiments in the tens of thousands to multi-year enterprise programmes in the millions. A realistic understanding of their own resources and needs helps organisations choose the best-suited engagement model and maximise ROI. The most common engagement models are:

- PoC / MVP → Fixed price

Defined scope, defined deliverables, bounded risk. Typically $30K-$150K over 6-12 weeks. The right starting point for technically uncertain use cases before full development is committed.

- Dedicated AI Team → Time & Material

A dedicated team of ML engineers, data scientists, and architects embedded in your programme and billed at agreed rates. Maximum flexibility as requirements evolve. The standard model for complex, multi-phase engagements.

- AI Support & Optimisation → Retainer (monthly fee)

Monthly commitment for continuous model monitoring, retraining, performance tuning, and technical support. Ensures deployed systems remain accurate and reliable as data and requirements change.

How to measure success?

Without pre-agreed KPIs, neither party can objectively determine whether the investment delivered value. Technical metrics – accuracy, precision, recall, and latency – measure whether the model works. Business KPIs – cost per transaction, revenue lift, reduction in false positives, improvement in resolution time – measure whether it matters. The most credible AI programmes establish both types at the outset, baseline them before deployment, and report against them at regular intervals after go-live.
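Both kinds of measure reduce to straightforward arithmetic once the counts are agreed. The sketch below derives accuracy, precision, and recall from a confusion matrix, and expresses a business KPI as a relative change against its pre-deployment baseline. All figures are invented for illustration.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Technical metrics from true/false positive and negative counts."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


def relative_change(baseline: float, current: float) -> float:
    """Business KPI movement versus baseline (negative = reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline


# Hypothetical fraud model: 80 frauds caught, 20 false alarms, 10 frauds missed.
metrics = classification_metrics(tp=80, fp=20, fn=10, tn=890)
print(metrics["accuracy"], metrics["precision"])  # 0.97 0.8

# Hypothetical KPI: average resolution time fell from 12 to 9 minutes.
print(relative_change(baseline=12.0, current=9.0))  # -0.25
```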

Why and how to choose a professional AI partner

Many organisations weigh whether to build internal AI capability instead of engaging an external partner. In-house development has its perks: control, institutional knowledge accumulation, and long-term independence from vendors. But for most organisations, particularly those making their first significant AI investments, a specialist partner offering AI software development services delivers faster, more reliable results. Here is why.

Access to a broader talent pool

The global supply of engineers who have actually designed, trained, deployed, and operated production AI systems at scale is small. Recruiting a senior ML engineer typically takes three to six months and commands compensation reflecting demand that significantly exceeds supply.

Assembling a team capable of delivering sophisticated Artificial Intelligence development services might require months of sustained, expensive recruitment effort. A specialist partner, in turn, provides immediate access to pre-assembled teams with the depth and breadth of experience that production AI demands, including the pattern recognition that comes from having solved similar problems before for other organisations or in other industries.

Faster time-to-market

A partner with relevant domain experience and a library of reusable components for AI/ML development services can significantly compress delivery timelines compared to an organisation building the same capability from scratch. This acceleration comes from accumulated domain knowledge that eliminates ramp-up time, pre-built accelerators that avoid rebuilding common patterns, established toolchains, and the ability to anticipate and prevent the failure modes that routinely slow down first-time AI programmes.

Future-proofing your AI solution

The AI landscape changes faster than most organisations can track. Frameworks evolve and regulatory requirements shift several times a year. A specialist partner invests continuously in staying up-to-date, evaluating emerging tools, tracking research developments, and refreshing architectural recommendations as the field moves.

This protects client organisations from the burden of maintaining currency in a domain that moves too fast for most IT functions to follow, while ensuring that production systems are built on architectures that can adapt to change rather than becoming expensive legacy constraints.

The right AI software developers bring the experience to challenge your assumptions, sharpen your problem formulation, and protect your investment from avoidable mistakes.

Conclusion

AI software development services are a capability whose value organisations see immediately and appreciate more over time. Realising that value requires a well-sequenced series of investments and cooperation with partners who understand both the technology and the business context in which it has to operate.

Blackthorn Vision has been delivering custom AI software development solutions for years, with deep expertise across healthcare, fintech, energy, biotech, and enterprise technology. If you are evaluating an AI initiative, whether it is a first proof of concept or a production-scale programme, we would welcome the conversation and partnership.

FAQ

What is the typical ROI timeline for Artificial Intelligence solutions implementation?

Can a custom AI solution be integrated into legacy software and ERP systems?

Yes. Modern SaaS ERPs and CRMs with REST APIs connect relatively straightforwardly. Older on-premise systems with proprietary data formats or no API layer require more creative approaches: middleware integration layers, RPA bots that interface with legacy UIs, event-driven architectures extracting data via database replication, or custom ETL pipelines synchronising data to a modern store accessible to the AI system.

Who owns the Intellectual Property (IP) and the custom-trained weights of the final model?

IP ownership is governed by contract. In well-structured professional engagements, the client owns all custom work product – fine-tuned model weights, proprietary training datasets, application code, and integration architecture – while the development partner retains ownership of their pre-existing tools, frameworks, and general-purpose components.

How do you mitigate AI hallucinations?

Hallucination management requires a layered approach: Retrieval-Augmented Generation grounds outputs in verified documents retrieved at query time; output validation layers check responses before they reach users; confidence scoring flags low-certainty outputs for human review; and fine-tuning on high-quality domain content reduces errors at the source.
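The routing logic behind confidence scoring can be sketched in a few lines. In the example below, a draft answer goes to a human reviewer if it cites no retrieved sources or if its confidence score falls under a threshold. The `Draft` structure, the 0.75 threshold, and the way confidence is obtained are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    """A model answer awaiting a release decision (hypothetical structure)."""
    text: str
    confidence: float  # assumed to come from a separate scoring step, 0.0 to 1.0
    sources: list[str] = field(default_factory=list)  # ids of retrieved documents


def route(draft: Draft, threshold: float = 0.75) -> str:
    """Decide whether a draft is sent automatically or reviewed by a person."""
    if not draft.sources:
        return "human_review"  # nothing grounds the answer
    if draft.confidence < threshold:
        return "human_review"  # low certainty
    return "auto_send"


grounded = Draft("Refunds take 14 days.", confidence=0.92, sources=["refund-policy"])
unsure = Draft("Refunds take 30 days.", confidence=0.41, sources=["refund-policy"])
ungrounded = Draft("Refunds are instant.", confidence=0.95)
print(route(grounded), route(unsure), route(ungrounded))
# auto_send human_review human_review
```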

What is the difference between a PoC and an MVP in AI?

A PoC answers a technical question: can AI solve this problem with the available data at the required performance level? It is typically internal, not user-facing, and its audience is the team and decision-makers who need evidence before approving full investment. An MVP answers a different question: will real users adopt and benefit from this AI feature? It is the smallest production-ready version that can be put in front of actual users to generate genuine usage data and feedback.

What specific data infrastructure is required before we can begin AI development?

The minimum requirement is accessible, structured data storage along with sufficient data volume and quality for the intended task, some mechanism for versioning and lineage tracking, and programmatic access to the systems that will feed data to the AI in production.
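Versioning and lineage tracking do not have to start with heavy tooling. One lightweight approach, sketched below under assumed names, is to fingerprint each dataset snapshot with a content hash and record which earlier snapshot it was derived from. Dedicated data versioning tools offer far more, but the principle is the same.

```python
import hashlib
import json
from typing import Optional


def dataset_fingerprint(rows: list[dict]) -> str:
    """Short content hash of a dataset snapshot.

    Key order inside each row does not matter; row order does.
    """
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def lineage_record(name: str, rows: list[dict], parent: Optional[str] = None) -> dict:
    """Minimal lineage entry linking a snapshot to the version it came from."""
    return {
        "dataset": name,
        "version": dataset_fingerprint(rows),
        "rows": len(rows),
        "parent": parent,
    }


# Hypothetical snapshots of the same table before and after a correction.
raw = [{"id": 1, "amount": 10}, {"id": 2, "amount": -5}]
cleaned = [{"id": 1, "amount": 10}]
raw_record = lineage_record("payments_raw", raw)
clean_record = lineage_record("payments_clean", cleaned, parent=raw_record["version"])
print(clean_record["parent"] == raw_record["version"])  # True
```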

How does custom AI development differ from using off-the-shelf AI software development solutions like ChatGPT or Claude?

General-purpose AI products work reasonably well across a range of tasks but are not optimised for any specific one. Custom AI software is trained on your data, integrated with your systems, configured to enforce your business rules, and monitored against your KPIs. A custom system understands your products, your customers, and your processes in a way that a general-purpose model cannot, and it connects to the systems that need to act on its outputs.