Gemini 3.1 Pro leads on GPQA at 94.3% versus GPT-5.5’s 93.6% – a narrow edge. On abstract reasoning benchmarks like ARC-AGI-2, the positions flip: GPT-5.5 scores 85.0% versus Gemini 3.1 Pro’s 77.1%. GPQA measures depth of scientific reasoning; ARC-AGI-2 measures generalization to genuinely new problems. Which one matters depends entirely on your use case.

AI titan clash: Gemini vs. ChatGPT

20-minute read

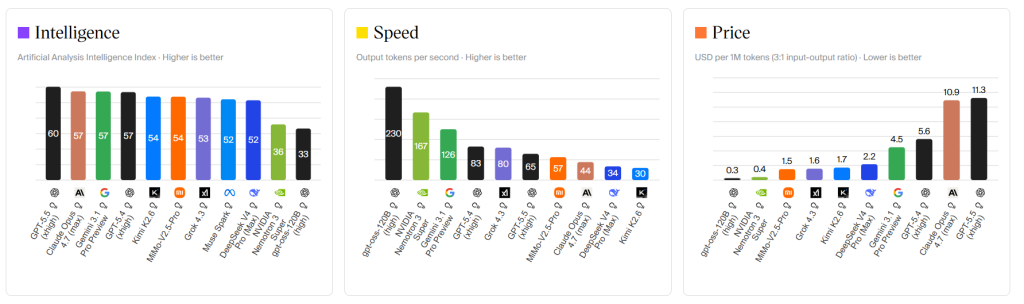

The debate has been going on for three years now – Gemini versus ChatGPT, Google versus OpenAI. As of April 2026, the two flagship models are GPT-5.5, released by OpenAI on April 23, and Gemini 3.1 Pro, released by Google on February 19. GPT-5.5 leads the Artificial Analysis Intelligence Index with a score of 60, while Gemini 3.1 Pro sits at 57. The gap is narrow, and it stops meaning much once you look at specific tasks, because each model wins a different set of them.

So the real question is not which model is better overall, but which one you should choose for your specific tasks. That is what this article answers.

What makes Gemini and ChatGPT fundamentally different?

These two companies have built their AI and ML products around entirely different ideas of what an AI assistant should be – and those ideas shape everything, from architecture to pricing to the kind of tasks each platform is genuinely good at.

Why OpenAI and Google are not actually competing for the same user

OpenAI builds GPT-5.5 as one unified reasoning system, designed to think carefully, act autonomously across multi-step tasks, and handle complex work with minimal supervision. In practice, that means calling tools, maintaining state across long tasks, and recovering from errors without human intervention.

The clearest example of this in practice: on GDPval, which tests agents producing real knowledge work across 44 occupations, GPT-5.5 scores 84.9%, covering tasks from sales presentations and accounting spreadsheets to clinical scheduling and engineering diagrams.

Google’s belief runs in the opposite direction. Gemini isn’t trying to be the most autonomous model. It’s trying to show up everywhere you already work. Gmail, Docs, Drive, Search, Maps, Meet – each one a place where Gemini activates without you switching tools or copying text between windows.

A marketing manager drafting a campaign brief in Google Docs can ask Gemini to pull competitor data from Search, reference a past proposal from Drive, and rewrite the brief in a new tone without leaving the document. That’s less a feature than a different relationship with software.

How each model actually processes information

The biggest technical difference between ChatGPT and Gemini isn’t processing speed or benchmark scores but how each model handles inputs that aren’t plain text. That architectural choice ripples into dozens of real-world use cases where one platform is simply better equipped than the other.

Why Gemini handles video, audio, and images in one pass

Gemini 3.1 Pro was trained on text, images, audio, and video all at once, not through separate models bolted together. When you send it a video, it doesn’t split the job into “extract frames” and “transcribe audio” as separate steps. It handles all of it together, which means less time wasted and less context lost between steps.

Here’s what that looks like in practice. A UX researcher uploads a 20-minute recording of a user testing session. Instead of manually timestamping every moment where the user hesitated or made an error, they ask Gemini to identify all such points from the video, with audio context included. Gemini returns a structured list with timestamps and observations. A modular system would have to stitch outputs from separate video, audio, and text models together, which introduces both delay and missed nuance. Gemini handles it natively, in one pass.
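To picture what a “structured list” means for whoever consumes it, here is a minimal Python sketch that parses such a response, assuming the researcher asked the model to reply with a JSON array. The field names (`timestamp`, `kind`, `observation`) and the sample payload are hypothetical; Gemini returns this exact shape only if you prompt or configure it to.

```python
import json
from dataclasses import dataclass

@dataclass
class Moment:
    seconds: int       # offset into the recording
    kind: str          # e.g. "hesitation" or "error" (hypothetical labels)
    observation: str

def to_seconds(ts: str) -> int:
    """Convert an "MM:SS" or "HH:MM:SS" timestamp to seconds."""
    total = 0
    for part in ts.split(":"):
        total = total * 60 + int(part)
    return total

def parse_moments(raw: str) -> list[Moment]:
    """Parse a JSON array of findings and sort them by position in the video."""
    items = json.loads(raw)
    moments = [Moment(to_seconds(i["timestamp"]), i["kind"], i["observation"]) for i in items]
    return sorted(moments, key=lambda m: m.seconds)

# Hypothetical model response for a 20-minute session.
sample = """[
  {"timestamp": "12:05", "kind": "error", "observation": "Taps the disabled Save button twice."},
  {"timestamp": "03:41", "kind": "hesitation", "observation": "Pauses on the pricing page looking for checkout."}
]"""

for m in parse_moments(sample):
    print(f"{m.seconds:>5}s  {m.kind:<10}  {m.observation}")
```

Sorting by offset matters because models do not always list findings in chronological order.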

On video analysis tasks, Gemini 3.1 Pro is roughly 95% faster than GPT-5.5. Even that comparison flatters GPT-5.5, which can’t process video natively and has to rely on workarounds such as analyzing extracted frames.

Why GPT-5.5 reasons deeper on complex problems

GPT-5.5 can’t process video natively. What it does instead is reason through complex, multi-step problems with remarkable depth and, crucially, act on that reasoning autonomously. Its thinking mode produces clean, auditable logic chains, while its agentic capabilities mean it doesn’t just suggest what to do next; it does it.

Let’s imagine a situation where a financial analyst needs to track portfolio performance across five different broker portals, compile the data, and build a weekly report in a spreadsheet. Manually, that’s two hours every Friday. With GPT-5.5’s computer use, the model opens each portal, extracts the data, builds the spreadsheet, and flags anomalies while the analyst reviews and approves each step. On OSWorld-Verified, which measures autonomous desktop operation, GPT-5.5 scores 78.7% – meaningfully above the human baseline.
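The “reviews and approves each step” part is the key design choice. Here is a minimal, model-agnostic Python sketch of that pattern: the agent proposes steps, and nothing runs until a reviewer callback approves it. The step names and the `approve` callback are hypothetical placeholders; a real computer-use integration would replace the lambdas with actual browser or spreadsheet actions.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Step:
    description: str
    action: Callable[[], str]  # performs the work and returns a short result

def run_with_approval(steps: list[Step], approve: Callable[[Step], bool]) -> list[str]:
    """Execute steps in order, stopping at the first one the reviewer rejects."""
    log = []
    for step in steps:
        if not approve(step):
            log.append(f"STOPPED before: {step.description}")
            break
        log.append(f"done: {step.description} -> {step.action()}")
    return log

# Hypothetical weekly-report workflow; each action is a stand-in.
steps = [
    Step("Extract positions from broker portal A", lambda: "12 rows"),
    Step("Extract positions from broker portal B", lambda: "8 rows"),
    Step("Write combined sheet and flag anomalies", lambda: "1 anomaly flagged"),
]

# Example reviewer policy: approve reads, hold back writes (a human would decide here).
log = run_with_approval(steps, approve=lambda s: not s.description.startswith("Write"))
print("\n".join(log))
```

Putting the gate before each action, rather than reviewing the finished report, is what lets a human catch a bad step before later steps build on it.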

Context window: Which AI can handle more data at once?

One spec that genuinely changes what you can do, not just how fast you can do it: Gemini 3.1 Pro accepts 1,048,576 input tokens while GPT-5.5 supports 1,000,000 tokens – both effectively at the 1M ceiling. On paper, parity. In practice, Gemini holds a 2.5x advantage on usable long-context in independent testing, with quality holding significantly better past 500K tokens.

What does a million tokens actually mean? Roughly 750,000 words – the equivalent of seven or eight full novels, a year of email threads, or a mid-sized software codebase with all its documentation. A law firm reviewing a merger deal might have contracts, amendments, correspondence, and filings totaling hundreds of thousands of words. Feeding all of it into Gemini in a single session and asking it to flag inconsistencies is something GPT-5.5 can attempt, but Gemini does it more reliably at that scale.
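The words-to-tokens conversion above can be turned into a quick budgeting check. This Python sketch uses the same rough ratio (about 0.75 words per token) and the input limits quoted in this section. The model labels, the 10,000-token prompt reserve, and the sample word counts are assumptions for illustration, and a real tokenizer will give different counts.

```python
# Rule of thumb from the text: 1,000,000 tokens ~ 750,000 words (~0.75 words per token).
WORDS_PER_TOKEN = 0.75

# Labels for this sketch, not API model IDs.
INPUT_LIMITS = {
    "gpt-5.5": 1_000_000,
    "gemini-3.1-pro": 1_048_576,
}

def estimate_tokens(word_count: int) -> int:
    """Rough token estimate; real tokenizers vary by language and formatting."""
    return round(word_count / WORDS_PER_TOKEN)

def fits(word_count: int, reserve_for_prompt: int = 10_000) -> dict[str, bool]:
    """Check whether a document plus an instruction budget fits each input window."""
    needed = estimate_tokens(word_count) + reserve_for_prompt
    return {model: needed <= limit for model, limit in INPUT_LIMITS.items()}

# Hypothetical merger data room of 600,000 words, then a slightly larger one.
print(estimate_tokens(600_000))  # 800000
print(fits(600_000))             # {'gpt-5.5': True, 'gemini-3.1-pro': True}
print(fits(760_000))             # {'gpt-5.5': False, 'gemini-3.1-pro': True}
```

The second case shows what “effectively at the 1M ceiling” means in practice: the extra 48,576 tokens on Gemini’s side only matter for inputs that land in that narrow band.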

On the output side, GPT-5.5 generates up to 128,000 tokens per response, while Gemini 3.1 Pro is limited to 65,536. When you need a complete, exhaustive document in one run – a full technical spec, a long-form legal brief – GPT-5.5’s output ceiling becomes the advantage.
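The output ceiling matters because it sets how many separate generation calls a long document needs. A minimal Python sketch, assuming the entire output budget goes to the document itself and using a hypothetical 100,000-token spec:

```python
import math

# Per-response output ceilings quoted above (labels, not API model IDs).
OUTPUT_LIMITS = {"gpt-5.5": 128_000, "gemini-3.1-pro": 65_536}

def responses_needed(doc_tokens: int, limit: int) -> int:
    """Minimum number of generation calls if each call can emit at most `limit` tokens."""
    return math.ceil(doc_tokens / limit)

# Hypothetical 100,000-token technical specification.
for model, limit in OUTPUT_LIMITS.items():
    print(model, responses_needed(100_000, limit))
```

Here GPT-5.5 can produce the spec in one call, while Gemini 3.1 Pro needs two, and every extra call is a seam where tone, numbering, or cross-references can drift.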

Gemini 3.1 Pro vs. GPT-5.5: Benchmark scores explained

Benchmarks can mislead as easily as they inform. A model that tops a leaderboard on abstract math may still give you a hallucinated statistic in a business report. The numbers below come from llm-stats.com and are sourced from April 2026 data. Each one comes with an explanation of what it means for actual work.

Overall intelligence: Where the two models are nearly tied

GPT-5.5 leads the Artificial Analysis Intelligence Index at 60, with Gemini 3.1 Pro at 57. That three-point gap is the headline number, but the index is a composite of ten evaluations, and the models split the categories sharply. GPT-5.5 wins on agentic tasks and autonomous tool use. Gemini 3.1 Pro wins on multimodal and grounded reasoning. This nuance is more important than the headline score itself.

Which AI wins on reasoning benchmarks?

Gemini 3.1 Pro slightly outperforms GPT-5.5 on GPQA Diamond (94.3% vs 93.6%), while GPT-5.5 leads on ARC-AGI-2 (85.0% vs 77.1%), Humanity’s Last Exam (52.2% vs 51.4%), and MCP Atlas (75.3% vs 69.2%).

GPQA Diamond tests PhD-level scientific reasoning – questions designed so that googling won’t help. Scoring 94.3% means reasoning from first principles on genuinely hard problems. That’s where Gemini edges ahead. ARC-AGI-2 tests abstract reasoning on completely novel problems – the kind that require genuine generalization, not memorized patterns.

GPT-5.5’s 85.0% versus Gemini’s 77.1% is the largest gap among these reasoning benchmarks, and it accounts for most of GPT-5.5’s overall lead in this category. On GPQA Diamond and Humanity’s Last Exam, the two models are within a point of each other.
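A quick calculation on the four reasoning scores quoted above shows where GPT-5.5’s lead actually comes from. The unweighted mean here is our own simplification; llm-stats.com may aggregate these benchmarks differently.

```python
# Reasoning scores quoted above, in percent: (GPT-5.5, Gemini 3.1 Pro)
SCORES = {
    "GPQA Diamond": (93.6, 94.3),
    "ARC-AGI-2": (85.0, 77.1),
    "Humanity's Last Exam": (52.2, 51.4),
    "MCP Atlas": (75.3, 69.2),
}

# Positive gap = GPT-5.5 ahead; rounded to hide float noise.
gaps = {name: round(gpt - gem, 1) for name, (gpt, gem) in SCORES.items()}
gpt_avg = sum(gpt for gpt, _ in SCORES.values()) / len(SCORES)
gem_avg = sum(gem for _, gem in SCORES.values()) / len(SCORES)

for name, gap in sorted(gaps.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:<22} {gap:+.1f}")
print(f"unweighted mean: GPT-5.5 {gpt_avg:.1f}, Gemini 3.1 Pro {gem_avg:.1f}")
```

On this simple mean, GPT-5.5 lands around 76.5 and Gemini 3.1 Pro at 73.0, with ARC-AGI-2 and MCP Atlas supplying nearly all of the difference.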

ChatGPT vs. Gemini for coding: Who writes better code?

This is where the practical difference becomes most consequential — and where the context of how you code matters more than the raw numbers.

GPT-5.5 leads on SWE-Bench Pro at 58.6% versus Gemini 3.1 Pro’s 54.2%, and on Terminal-Bench 2.0 at 82.7% versus 68.5%. SWE-Bench Pro uses real GitHub issues from production codebases, not synthetic puzzles. Scoring 58.6% means successfully fixing roughly six out of ten real bugs. Terminal-Bench measures command-line planning and iteration – exactly the kind of agentic coding work where GPT-5.5’s autonomous capabilities compound.

For developers using GitHub Copilot, the GPT-5.5 integration creates a tight loop between model capability and IDE tooling. A senior engineer gets model suggestions inline, without context-switching. That integration advantage doesn’t show up in any benchmark.

Where Gemini closes the gap: code tasks that involve other media. A developer trying to reproduce a UI bug captured in a screen recording can feed the video directly to Gemini and ask it to explain what’s failing. A data analyst working with a scanned report full of tables can have Gemini extract the numbers and write the analysis. These are cases where GPT-5.5’s inability to process video becomes a real problem.

AI-generated video, images, and audio: Google vs. OpenAI

This is the most one-sided section of the entire comparison. Google has built a full creative media stack – video, image, music – that OpenAI hasn’t matched. The gap is wide enough that, for creative professionals, this section alone may decide the question.

Google Veo 3: The only AI that generates video with native audio

Veo 3 generates not just video but synchronized audio – dialogue, sound effects, and ambient noise – all from a single text prompt. That’s the real breakthrough. Earlier AI video tools typically produced silent clips that required audio to be added in post-production. Veo 3 removes that step entirely.

A small marketing agency producing social content for a product launch used to need: a video prompt, a silent clip download, separate audio generation, and editing time to sync them. With Veo 3, a single prompt like “a barista in a busy café preparing a cortado, espresso machine hissing, morning light through the window” returns a complete, synchronized clip. Klarna used Veo and Imagen on Vertex AI to transform time-intensive production processes into quick, efficient tasks – producing b-roll, YouTube bumpers, and social animations at a speed that wasn’t previously possible.

Veo 3.1, released in October 2025, added richer audio generation, better cinematic style control, and more consistent characters across multiple scenes, fixing the character drift problem that made earlier AI videos unusable for narrative work. GPT-5.5 has no comparable native video generation capability.

Imagen 4: How Google’s image generator compares to DALL-E

Imagen 4 generates images up to 2K resolution with strong fine detail – fabrics, water droplets, animal fur – across photorealistic and abstract styles alike. Its most practically useful improvement is typography: signs, book covers, and packaging labels now render with correct spelling and consistent letterforms. That sounds minor until you’ve spent twenty minutes regenerating a hero image because the café sign in the background said “COFEE.”

A faster Imagen 4 variant runs up to ten times quicker than Imagen 3, making high-volume creative workflows viable. An e-commerce team generating hundreds of product variation images – different backgrounds, different lighting, different seasonal themes – can iterate in minutes instead of hours. GPT-5.5’s image generation through DALL-E remains competitive for illustration and concept art, but on photorealism and text accuracy, Imagen 4 is clearly ahead.
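High-volume variation work is mostly a combinatorics problem: a few variation axes multiply quickly. The Python sketch below only builds the prompt list. The axes and wording are hypothetical, and the call that sends each prompt to Imagen 4 (or any other image model) is deliberately left out.

```python
from itertools import product

# Hypothetical variation axes for one product shot.
backgrounds = ["marble countertop", "linen tablecloth", "outdoor patio"]
lighting = ["soft morning light", "bright studio light"]
themes = ["spring", "summer", "autumn", "winter"]

def build_prompts(product_name: str) -> list[str]:
    """One prompt per combination of background, lighting, and seasonal theme."""
    return [
        f"Photorealistic photo of {product_name} on a {bg}, {light}, {theme} styling"
        for bg, light, theme in product(backgrounds, lighting, themes)
    ]

prompts = build_prompts("a ceramic coffee mug")
print(len(prompts))  # 24
print(prompts[0])
```

Three backgrounds, two lighting setups, and four seasons already give 24 images per product, which is why generation speed, not just quality, decides whether this workflow is practical.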

AI music generation: Why Google Lyria has no OpenAI rival

Lyria 2, Google’s music generation model, is generally available on Vertex AI and produces high-quality music across many styles. A content creator building a documentary-style video essay can generate a custom score that fits the exact mood and pacing – without stock music licenses or a composer. GPT-5.5 has no direct consumer equivalent. For teams building multimedia content pipelines, that gap makes a big difference.

Gemini vs. ChatGPT: Which AI fits your existing tools?

Both platforms connect with the outside world, but in completely different ways, built for completely different users. More than any benchmark, your existing toolstack should drive this decision.

Gemini inside Google workspace: Gmail, Docs, Drive, and Meet

“Integration” in most AI comparisons means an API connection that someone configured. Gemini’s Workspace integration is something structurally different – the model runs natively inside the tools where your work already lives.

Here’s a concrete example. A project manager starts Monday morning in Gmail. They ask Gemini to summarize all client emails from the past week, flag anything that needs a reply today, and draft response templates for the three most urgent threads – from inside Gmail, without opening a separate AI window. Then they move to Google Docs, where Gemini pulls in notes from a Drive folder, the latest timeline from Sheets, and last week’s meeting transcript from Meet, and uses all of it to update the project status report. Gemini handles pulling email threads automatically, organizing Drive files, and summarizing calendar events – not because it’s smarter, but because it lives inside those surfaces and has permission to read them.

Agent Mode, available on desktop in 2025, lets Gemini handle multi-step tasks by combining web browsing, deep research, and Google app integrations with minimal oversight. For organizations already running on Workspace, this isn’t a feature comparison – it’s a different category of utility.

ChatGPT’s third-party integrations and computer use explained

ChatGPT’s ecosystem works differently. Instead of going deep in one suite, it connects broadly across many platforms. The GPT Store holds over three million Custom GPTs, covering legal document review, financial modelling, tax preparation, and most professional verticals. A compliance officer can build a Custom GPT loaded with their company’s regulatory documents as knowledge files and instructed to answer internal questions from those materials. Integrations extend into Slack, Notion, HubSpot, Dropbox, and Canva.
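Custom GPTs handle knowledge files with OpenAI’s own retrieval. The toy Python sketch below only illustrates the general idea of answering from a fixed document set and declining when nothing relevant is found. The keyword-overlap scoring, the `min_overlap` threshold, and the sample policy texts are hypothetical simplifications; production systems usually rely on embedding-based search.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for real retrieval features."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def answer_from_docs(question: str, docs: dict[str, str], min_overlap: int = 2) -> str:
    """Return the best-matching passage, or decline if nothing in the docs is relevant."""
    q = tokenize(question)
    best_name, best_score = None, 0
    for name, text in docs.items():
        score = len(q & tokenize(text))
        if score > best_score:
            best_name, best_score = name, score
    if best_name is None or best_score < min_overlap:
        return "Not covered by the provided documents."
    return f"[{best_name}] {docs[best_name]}"

# Hypothetical internal policy documents.
docs = {
    "retention-policy.txt": "Client records must be retained for seven years after account closure.",
    "gifts-policy.txt": "Employees may not accept gifts worth more than 100 dollars from vendors.",
}

print(answer_from_docs("How long must client records be retained?", docs))
print(answer_from_docs("What is the parking policy?", docs))
```

The refusal branch is the important part: a grounded assistant is only as trustworthy as its willingness to say a question falls outside its documents.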

The standout capability for GPT-5.5, though, is computer use, and it deserves a specific example. In Agent Mode, ChatGPT actively engages with websites, clicking, typing, and navigating to complete real-world tasks such as building financial models, comparing travel options, or creating executive dashboards.

A sales operations manager who normally spends two hours every Friday pulling data from three SaaS dashboards and manually building a pipeline report can give that task to GPT-5.5. The model opens the browser, logs in, extracts data, opens the spreadsheet, and builds the report.

On OSWorld-Verified, which measures autonomous desktop operation, GPT-5.5 scores 78.7%. The remaining failure rate of roughly 21% means human oversight is still required, but the tasks where it works reliably – CRM entry, invoice processing, pulling reports from SaaS portals without good APIs – are exactly the tasks that eat hours of manual work every week.

Gemini vs. ChatGPT on mobile: Which app works better?

Both apps are polished, and the difference comes down to ecosystem fit more than quality. Gemini Live lets you point your phone at something – a product label, a whiteboard, a restaurant menu in a foreign language – and ask about it in real time.

For example, a traveler in Tokyo holds up a menu, and Gemini translates it and recommends dishes based on their dietary preferences. A warehouse worker can hold a phone up to a shelf label and get instant inventory context.

ChatGPT’s mobile voice mode brings GPT-5.5’s reasoning into natural conversation with low latency. It works across devices, with smoother interruptions and a more natural back-and-forth; Gemini’s real-time voice is currently more mobile-focused and more restricted. On Android, Gemini’s OS-level presence gives it the edge in Google-first households. On iOS, the gap is smaller.

ChatGPT vs. Gemini pricing: Which AI is worth the money?

At first glance, both platforms look similar at the $20 consumer tier. Look closer, and the differences, particularly at the API level, become more significant.

GPT-5.5 vs. Gemini 3.1 Pro: Full pricing breakdown (April 2026)

| | ChatGPT (OpenAI) | Gemini (Google) |
|---|---|---|
| Current flagship model | GPT-5.5 (Apr 23, 2026) | Gemini 3.1 Pro (Feb 19, 2026) |
| Free tier | GPT-5.3 (with ads) | Gemini 3 Flash |
| Standard paid | ChatGPT Plus, $20/mo | Google AI Pro, $19.99/mo |
| Standard extras | Custom GPTs, DALL-E, Deep Research (10/mo) | 2TB storage, Workspace integration, Imagen 4, Deep Research (unlimited) |
| Premium tier | ChatGPT Pro, $200/mo | Google AI Ultra, $249.99/mo |
| Premium extras | GPT-5.5 Pro, unlimited reasoning | Veo 3, highest rate limits, YouTube Premium |
| API input (per 1M tokens) | $5.00 | $2.50 |
| API output (per 1M tokens) | $30.00 | $15.00 |
| Context window (input) | 1,000,000 tokens | 1,048,576 tokens |
| Context window (output) | 128,000 tokens | 65,536 tokens |
| Knowledge cutoff | Not specified | January 2025 |

Source: llm-stats.com, April 27, 2026

The API pricing gap is the biggest change from the previous generation. Gemini 3.1 Pro costs $2.50 input and $15.00 output per million tokens, while GPT-5.5 costs $5.00 input and $30.00 output – exactly twice as expensive. For developers running high-volume workloads, that 2x gap is significant.

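A startup pushing 50 million output tokens a month pays $750 more per month with OpenAI ($30 vs $15 per million tokens). Over a year, that’s $9,000 in additional infrastructure costs. Token efficiency can narrow the gap: GPT-5.5 is roughly 40% more token-efficient on Codex tasks, and if that means 40% fewer tokens for the same job, its effective cost there drops to about 1.2x Gemini’s rather than 2x. On workloads where both models use similar token counts, the full 2x difference applies. When both models are capable enough for a job, Gemini’s price becomes a structural argument.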

The consumer tier story is different. Google AI Pro bundles 2TB of cloud storage – which costs $9.99/month as a standalone Google One plan – effectively bringing the model access cost down to around $10/month. If you’re already paying for the 2TB Google One plan, upgrading to AI Pro adds only about $10/month. ChatGPT Plus at $20 gives you GPT-5.5 access, Custom GPTs, DALL-E, computer use, and Deep Research, but Deep Research is capped at 10 reports per month. Gemini’s Deep Research has no hard monthly limit, which makes a real difference for researchers and analysts who run sessions multiple times a week.
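The pricing arithmetic in this section is easy to rerun with your own volumes. This Python sketch hard-codes the per-million-token prices from the table above. The 40% efficiency factor is the Codex-task figure quoted above, treated here as “40% fewer tokens for the same job”, which is an assumption about what that figure means.

```python
# Prices from the table above, USD per 1M tokens (labels, not API model IDs).
PRICES = {
    "gpt-5.5": {"input": 5.00, "output": 30.00},
    "gemini-3.1-pro": {"input": 2.50, "output": 15.00},
}

def monthly_cost(model: str, input_m: float, output_m: float) -> float:
    """Cost for a month with `input_m` / `output_m` million tokens."""
    p = PRICES[model]
    return input_m * p["input"] + output_m * p["output"]

# The example from the text: 50M output tokens per month (input ignored).
diff = monthly_cost("gpt-5.5", 0, 50) - monthly_cost("gemini-3.1-pro", 0, 50)
print(diff, diff * 12)  # 750.0 9000.0

# If GPT-5.5 needs 40% fewer tokens for the same job, its cost relative
# to Gemini 3.1 Pro drops from 2.0x to 1.2x.
efficiency = 0.40
ratio = PRICES["gpt-5.5"]["output"] * (1 - efficiency) / PRICES["gemini-3.1-pro"]["output"]
print(round(ratio, 2))  # 1.2

# Consumer tier: AI Pro price minus the standalone 2TB Google One plan.
print(round(19.99 - 9.99, 2))  # 10.0
```

Plugging in your real input/output split matters, because output tokens cost six times as much as input tokens on both platforms.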

The premium tiers are hard to justify for most users. Google AI Ultra at $249.99/month makes sense for video production teams where Veo 3 access alone justifies the cost. ChatGPT Pro at $200/month makes sense only if you’re consistently hitting Plus rate limits, which takes genuinely heavy daily usage.

How reliable are Gemini and ChatGPT? Hallucinations, privacy, and trust

Neither platform is safe to use without critical judgment. But they fail in different ways, and knowing which type of error costs you more is more useful than any aggregate safety score.

How each AI grounds its answers in real sources

The two models fail differently, and that difference is predictable. Gemini’s default connection to Google Search means its answers about current events are tied to live sources. Ask Gemini about this week’s interest rate decision, and it queries the live web as part of the answer. Ask GPT-5.5 the same question without its browsing tool enabled, and you may get confident-sounding information from its training data, which has no publicly specified cutoff date.

On the flip side, ask both models to reason through a complex regulatory framework that hasn’t changed in years. GPT-5.5’s structured chain-of-thought produces a more organized, step-by-step explanation that’s easier to verify and act on. However, when it’s wrong, it’s often confidently wrong. In agentic contexts, that overconfidence can cause cascading errors: the model makes a bad decision at step three, doesn’t recognize it, and builds everything after on a flawed premise.
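The cascading-error risk is easy to quantify with a simple model. Assuming each step of an agentic task succeeds independently with the same probability (real errors are often correlated, so treat this as an illustration), the chance of a clean end-to-end run falls geometrically with the number of steps. The per-step rates below are hypothetical, not OSWorld or vendor figures.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that all `steps` succeed, assuming each succeeds independently with `per_step`."""
    return per_step ** steps

# Hypothetical per-step reliabilities for 1, 5, 10, and 20-step tasks.
for p in (0.99, 0.95, 0.90):
    row = [round(chain_success(p, n), 2) for n in (1, 5, 10, 20)]
    print(f"per-step {p:.2f}: {row}")
```

At 95% per step, a ten-step task completes without a single error only about 60% of the time, which is why checkpoints between steps matter more than the headline benchmark score.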

A practical rule of thumb: if the answer could have changed in the past few months, trust Gemini more; if it requires careful multi-step reasoning from stable information, trust GPT-5.5 more. Either way, verify all sensitive and crucial information, regardless of which model you’re using.
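For teams that call both APIs, the rule of thumb above can be encoded as a tiny routing function. This is a hypothetical sketch: the two boolean flags stand in for whatever request classification your application does, and preferring freshness when both apply is our assumption, not part of the rule.

```python
from enum import Enum

class Pick(Enum):
    GEMINI = "Gemini 3.1 Pro"
    GPT = "GPT-5.5"
    EITHER = "either"

def route(time_sensitive: bool, multi_step_stable: bool) -> Pick:
    """Freshness favors Gemini; stable multi-step reasoning favors GPT-5.5.

    When both flags are set, this sketch prefers freshness (an assumption).
    Whatever it returns, sensitive answers still need human verification.
    """
    if time_sensitive:
        return Pick.GEMINI
    if multi_step_stable:
        return Pick.GPT
    return Pick.EITHER

print(route(time_sensitive=True, multi_step_stable=False).value)  # Gemini 3.1 Pro
print(route(time_sensitive=False, multi_step_stable=True).value)  # GPT-5.5
```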

ChatGPT vs. Gemini: Enterprise data privacy compared

For enterprise or regulated-industry use, this requires actual due diligence. OpenAI Enterprise provides zero data retention for API calls by default. Google’s Gemini Enterprise at $30 per user per month runs on Google Cloud’s compliance stack, including HIPAA-eligible configurations. Both have improved significantly since 2024. But teams in healthcare, legal, or finance need to validate their specific requirements against each vendor’s current data processing agreement. Don’t assume either is automatically compliant.

Individual users should also know that both platforms use conversations on personal, non-business plans to improve their models by default, unless you actively opt out. That setting is buried in both interfaces, and most users have never changed it.

Do AI hallucination rates actually tell you anything useful?

Hallucination rates shift with every model update, vary enormously by task type, and mean nothing without context. In general, Gemini is more reliable on what’s currently happening; GPT-5.5 is more reliable on how to reason through a complex, stable problem, but will occasionally do so with misplaced confidence. Both get things wrong, just not the same things.

ChatGPT or Gemini: The right choice by use case

The choice comes down to where you work and what you build, not which model scored three points higher on an index you’ll never interact with directly. Here’s how it breaks down by role.

Marketers and content creators producing video, imagery, and multimedia campaigns should lean toward Gemini. Veo 3 for video, Imagen 4 for stills, Lyria 2 for audio, and unlimited Deep Research for campaign intelligence – it’s a complete creative stack at a sensible price.

Developers and engineers working in professional codebases with GitHub integration should lean toward ChatGPT. GPT-5.5’s SWE-Bench Pro lead, Copilot integration, and Terminal-Bench 2.0 margin make it the stronger default for complex debugging and agentic coding pipelines. It also leads on agentic and autonomous workflows, which is the direction professional development tooling is moving.

Researchers and analysts dealing with long documents such as legal contracts, academic papers, regulatory filings, and financial reports should lean toward Gemini. The 1M token context window with better long-context quality past 500K tokens, combined with unlimited Deep Research, gives it a structural advantage for document-heavy work.

Operations and admin professionals who spend hours on repetitive digital tasks should evaluate GPT-5.5’s computer use seriously. CRM data entry, invoice processing, weekly report generation from multiple SaaS portals – Agent Mode can handle tasks like researching companies and building comparison spreadsheets, cleaning CSV data, and generating presentations from web research, in the time it takes to brief someone to do the same task manually.

Google Workspace users of any kind should default to Gemini. The ecosystem integration isn’t something ChatGPT can match with plugins or connectors, and the effective model cost after factoring in bundled storage makes it the obvious choice.

Whichever tool you choose now, the decision isn’t permanent. The most productive professionals in 2026 don’t simply pick Gemini or ChatGPT; they decide which platform is their default and when to switch to the other.

Which AI wins in 2026? The honest answer

GPT-5.5 is the stronger model on most benchmarks right now, and its agentic capabilities are genuinely ahead of anything Google has built for autonomous task execution. But “stronger on benchmarks” and “better for your workflow” are different questions, and Gemini 3.1 Pro answers the second one more often than the first would suggest.

Google owns the creative media stack – video, image, audio – and the Workspace-embedded enterprise layer that can’t be replicated by any amount of API work. At half the API price of GPT-5.5, with stronger long-context quality, and with the only native video processing of the two models, Gemini 3.1 Pro is the better default for a larger share of users than the benchmark gap implies.

OpenAI owns agentic depth, desktop automation, and the largest developer ecosystem. If your work involves autonomous multi-step tasks, complex reasoning pipelines, or professional development tooling, GPT-5.5 earns the premium.

Finally, don’t treat either platform as a chatbot. A chatbot answers questions. What both Gemini and ChatGPT have become, at their best, is a thinking layer that sits alongside your actual work. That changes how you prompt, how you integrate, and how much value you get out of the subscription you’re probably already paying for.

Now it’s time to build with AI

Blackthorn Vision delivers end-to-end AI software development, from custom GPT and Gemini integrations to full agentic systems.

FAQ

Is Gemini better than ChatGPT in reasoning benchmarks like GPQA and MMLU?

Which is better at real-time web searching and fact-checking, Gemini or ChatGPT?

Gemini, for a structural reason: live web access through Google Search is its default behavior, not a tool that needs to be enabled. ChatGPT’s web search is capable but supplementary – triggered when needed rather than baked into every response. On BrowseComp, which tests web research accuracy, GPT-5.5 scores 90.1% versus Gemini 3.1 Pro’s 85.9% – a benchmark win for GPT-5.5. But in everyday use, Gemini’s search-grounded defaults mean you get sourced, current answers without having to ask for them explicitly. The benchmark and the user experience tell slightly different stories here.

How does the Thinking mode in the GPT-5 series differ from Google's Deep Research capabilities?

They solve different problems. GPT-5.5’s Thinking mode applies extended chain-of-thought reasoning to a single hard question – it takes longer to answer, but the logic is cleaner and more auditable step by step. Google’s Deep Research is a multi-step agentic workflow: it browses live sources, gathers citations, and produces a full structured report over several minutes. In a direct test, ChatGPT’s Deep Research found 18 sources to Gemini’s 47 – but ChatGPT relied more consistently on research-backed data, while Gemini sometimes drew incorrect conclusions despite the larger source count. Thinking mode is for depth on one hard question. Deep Research is for broad, sourced research synthesis. They’re complementary, not competing.

Is Google Gemini better than ChatGPT at writing, debugging, and deploying complex software architectures?

GPT-5.5 leads on SWE-Bench Pro (58.6% vs 54.2%) and Terminal-Bench 2.0 (82.7% vs 68.5%). It also integrates directly with GitHub Copilot. For teams in professional development pipelines with complex, multi-file codebases, GPT-5.5 is the stronger default. Gemini 3.1 Pro is competitive on isolated coding tasks and better when the work involves visual or multimodal context.

Can Gemini 3.1 Pro process a 2-hour video file more accurately than ChatGPT can analyze a 500-page PDF?

Gemini handles the video natively, analyzing sampled frames and the full audio track together in a single pass. GPT-5.5 handles long PDFs well but may need to chunk a dense 500-page document, losing the ability to reason across the full text at once. For video, GPT-5.5 has no native capability. Gemini wins this comparison clearly.
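Whether a long video fits in one request depends on how many tokens each second of footage consumes, which varies with the model and its media-resolution setting. For earlier Gemini models, Google documented roughly 300 tokens per second at default resolution and about 100 at low resolution. The Python sketch below treats those rates as assumptions, not confirmed Gemini 3.1 Pro figures, and checks a 2-hour file against the 1,048,576-token input limit.

```python
GEMINI_INPUT_LIMIT = 1_048_576  # from the pricing table

def video_tokens(minutes: float, tokens_per_second: float) -> int:
    """Approximate prompt tokens for a video at a given per-second token rate."""
    return round(minutes * 60 * tokens_per_second)

# Assumed rates (documented for earlier Gemini models); check current docs for 3.1 Pro.
for label, rate in (("~300 tok/s", 300), ("~100 tok/s", 100)):
    needed = video_tokens(120, rate)
    print(f"{label}: {needed:,} tokens, fits: {needed <= GEMINI_INPUT_LIMIT}")
```

Under the higher rate, a 2-hour video would need splitting or a lower resolution setting; under the lower rate, it fits in a single request with room to spare for the prompt.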

Is Gemini's native multimodality fundamentally faster than ChatGPT's modular approach?

Yes, in the scenarios where it applies. Processing all input types through one unified system removes the delay of converting between separate specialist models. On video analysis tasks specifically, Gemini 3.1 Pro is roughly 95% faster, and even that understates the gap, since GPT-5.5 has no native video processing at all and has to rely on workarounds.

Google Gemini vs. ChatGPT: Which platform offers better data privacy protections for corporate and enterprise use?

Both offer solid enterprise compliance frameworks. OpenAI Enterprise gives zero-retention API access by default; Google Gemini Enterprise runs on Google Cloud’s stack with HIPAA-eligible configurations. The right choice depends on which cloud provider your organization has already audited. There’s no universal winner – match the vendor to your existing compliance posture.

How do the subscription models of OpenAI and Google compare in terms of value for money?

At the $20 consumer tier, Google AI Pro bundles Gemini 3.1 Pro with 2TB of storage and unlimited Deep Research. The storage alone costs $9.99/month, bringing the effective model cost to around $10/month. ChatGPT Plus gives you GPT-5.5 access, Custom GPTs, DALL-E, and computer use – but Deep Research is capped at 10 reports per month. At the API level, Gemini 3.1 Pro costs exactly half as much as GPT-5.5 on both input and output tokens. For most users at the consumer tier, Google’s bundle wins on pure value. For developers at the API level, the cost argument for Gemini is even stronger.